Adding an AI Agent to an Existing APEX App

Introduction

Modern frontier LLMs are now reliable enough to support practical agent workflows when paired with strong orchestration and guardrails. Adding an agent to an existing APEX app allows you to:

Simplify user workflows

Simplify the user interface

Automate repetitive tasks

Leverage your existing data model, views, APIs, etc.

Get the experience of building agents with minimal investment

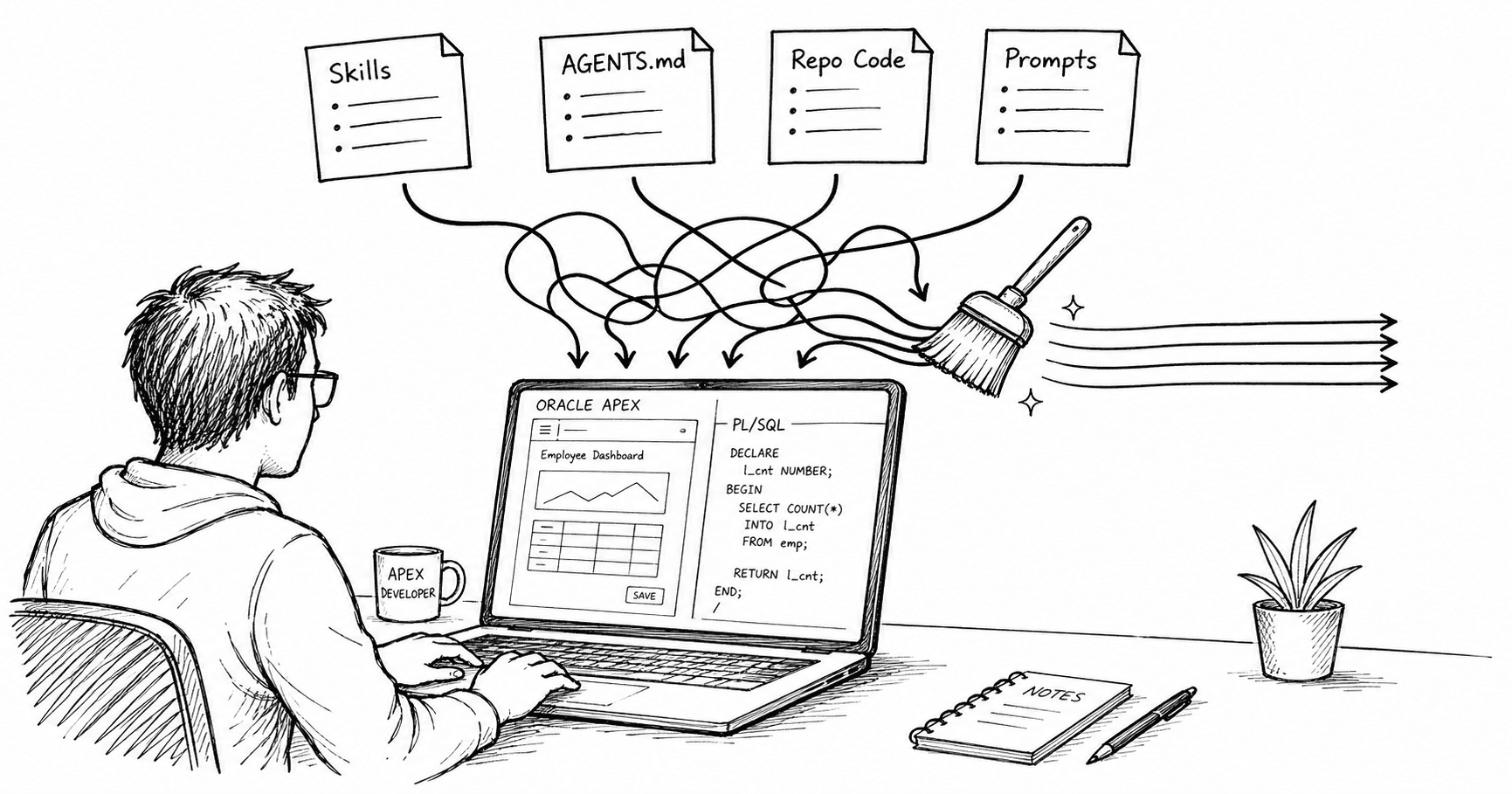

In this post, I will use an example of an APEX-based project management application I have been building for a client off and on over the past few years. Over time, it has grown to more than fifty pages and thousands of lines of PL/SQL. A month ago, we started on a project to introduce an AI agent to simplify the app.

Introducing AI Agents

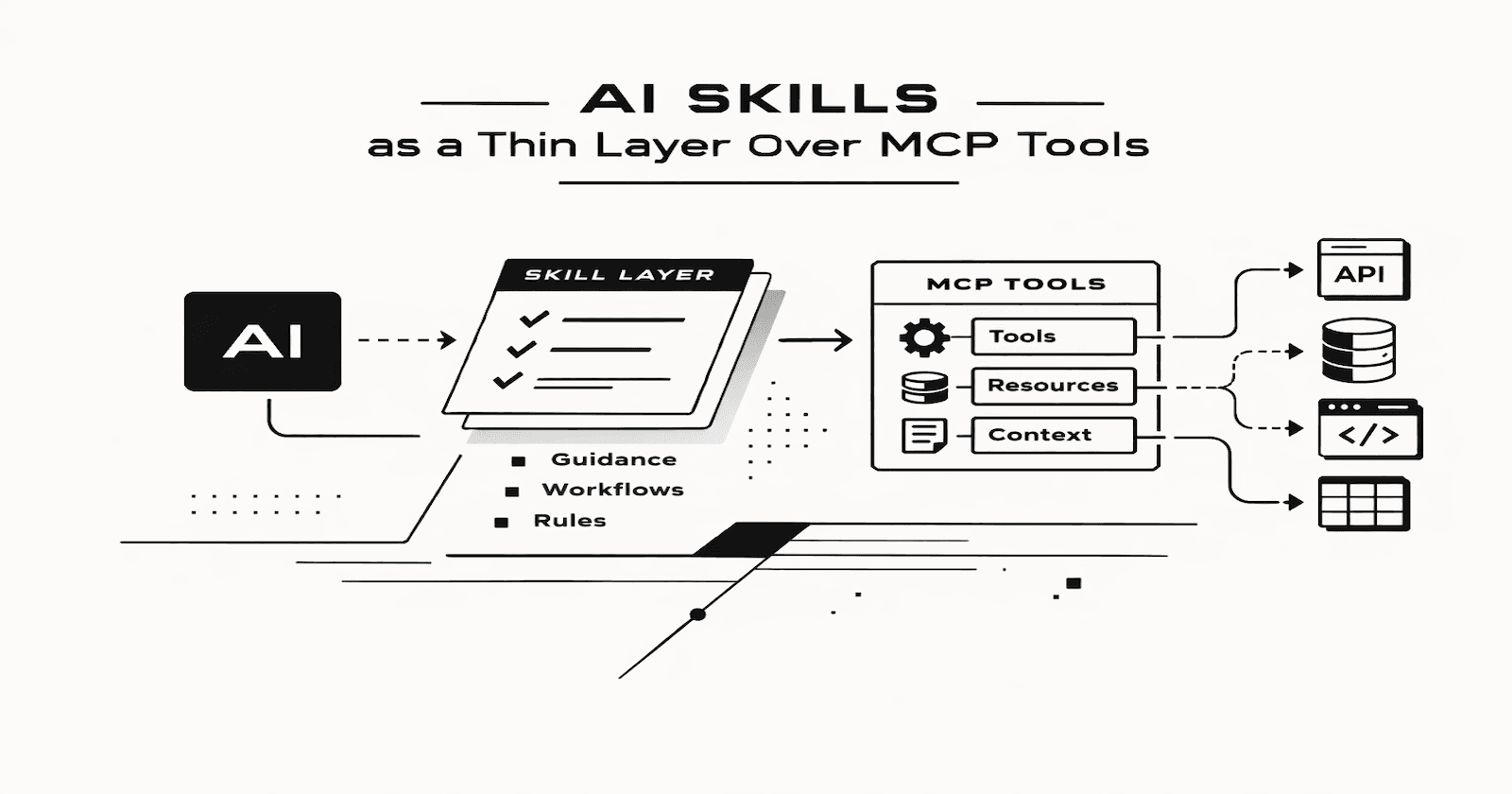

By combining a frontier model with tools (PL/SQL APIs, Web Services) and strong governance (permissions, auditing, guardrails), you can build an AI agent that executes multi-step business tasks, not just chats, safely within defined constraints.

Agents built for APEX use a PL/SQL framework to manage the 'Agentic Loop'. That's right; when you boil it down, an agent is a loop. Within this loop, the LLM makes suggestions as to which tools it wants to run; your code decides whether to run them. Your code is in control.

The diagram below illustrates the agentic loop.

The orchestrator controls the agentic loop, maintains state (in a database table) between tool calls, and decides when to hand control back to the user and when the loop should finish. The dispatcher receives tool requests, checks what data the user is allowed to see, performs schema and business validations, calls the tool, and returns the response to the orchestrator to feed back to the LLM during the next iteration.

This table illustrates the differences between a standard APEX approach and an Agentic approach:

Feature | Standard APEX Integration | Agentic Framework |

Logic Location | Hardcoded in Page Processes | Dynamic in Orchestrator Loop |

User Input | Structured (Forms/Pickers) | Unstructured (Natural Language) |

Validation | On-Submit / Client-side | Dispatcher-level / Pre-execution |

Flexibility | Rigid workflow | Multi-step "Reasoning" capability |

Security | Session/ACL based | ACL + Intent Validation |

Simplifying Project Management

So, let's get back to the project management app use case. The app handles everything related to project management, including questions, risks, issues, requirements, design documents, emails, and meeting notes. As the app grew and we introduced new pages, fields, and buttons, users started to get frustrated by how long it takes to navigate to where they need to go. I am sure Jira users can relate!

Agent Scope

For phase one of this project, we decided to limit the scope to allowing users to inquire about, create, and update project questions, risks, and issues.

Too often, we try to cover every use case and end up shipping nothing. That risk is higher with emerging technologies, where outcomes are uncertain. Narrowing scope doesn’t mean lowering the bar, it means delivering a genuinely useful slice that also proves whether the technology will scale to the entire app.

Tools

We gave the Agent the following tools:

Show the project structure. This tool provides the information about the project, such as the customer, the project's structure, the project lead, etc.

List project team members. This tool provides details of all the people associated with the project. This is used by the LLM to assign tasks, return tasks for specific people, etc.

Search questions, risks, and issues. This tool allows users to perform searches using assigned to, status, question, risk, or issues text (via Vector Search).

Create questions, risks, and issues

Update questions, risks, and issues

Each tool is a PL/SQL function or procedure that either returns some JSON or performs an action. Because we started with a fully functional App, we were able to leverage existing tables, views, and PL/SQL APIs.

One of the most underrated parts of designing tools is the descriptive tool metadata that you send to the LLM with your prompt. The tool call metadata must clearly describe each tool along with its parameters. You should also resist the urge to include too many tools with each request to the LLM. Only send the tools that are relevant to the activity you are trying to perform. This prevents the LLM from having to look through and 'understand' tools it will never need.

The Orchestrator

The orchestrator is a PL/SQL procedure that controls the agentic loop. Essentially, the process involves looping, calling tools, and providing results back to the LLM until the user's request is completed (i.e., no further tool requests from the LLM).

We use a database table to maintain state between tool calls. This table allows us to re-construct the chat history and pass it to the LLM with each API call.

The Brain

The orchestrator passes a system prompt to the LLM during each request. This acts as the agent's brain. The system prompt provides background about the Application, the agent's objectives, rules for tool use, required behaviors, and how responses should be formatted.

Dispatcher

When the LLM requests a tool, the dispatcher does the following:

Verifies the tool exists

Verifies the validity of the parameters the LLM requested

Verifies the user has access to the action, and or data being requested

Executes the PL/SQL Function or Procedure

Shapes the JSON response and hands it back to the Orchestrator to pass back to the LLM

Creates and Updates

With an emerging technology like this, you may be nervous about allowing the AI to request tool calls that create or update records in your database. Sure, you write the tool so you can ensure whatever record is created is valid, but what if the AI decides it wants to create 100 valid records when it should have been just one? To allay fears, we set up the agent and the tools with a flag indicating if a particular tool call requires human approval before it can run. Initially, we set all the create/updated tools to require human approval. Down the road, we expect that we may want to turn this confirmation off for some write tools.

Vector Search

I briefly mentioned that the Search tool uses Vector search so users can perform semantic searches on the text from questions, risks, issues (and the associated answers/responses). This allows users to perform powerful searches from a prompt. e.g., 'Find Open questions assigned to Jon related to California'.

We established a queueing mechanism that queues new and updated content. An APEX Automation picks up the queued content every 15 minutes. The automation chunks the content using the SQL function VECTOR_CHUNKS. The chunks are then vectorized using the SQL function VECTOR_EMBEDDING. We use the ONNX model ALL_MINILM_L12_V2 (available here) in the database to create the embeddings (vectorize the chunks).

Instrumentation

One of the most useful things we included at the beginning is comprehensive logging and diagnostics. Every LLM API call, every tool request, and every tool response is logged for each conversation. This allows us to replay conversations for audit and troubleshooting purposes.

It even allows us to troubleshoot strange behaviors using AI. For example, using the Oracle SQLcl MCP tool connected to the Codex App. I can say something like, "Review conversation ID 123 and find out why only 1 of the 3 provided issues were created by the agent." Codex can then use SQLcl to query the conversations table, and iterate until it determines whether the issue is code-related or system-prompt-related.

Lessons Learned

Never trust the model to handle security or data integrity. Your code (orchestrator and dispatcher) and your data model should handle them. Always!

Log everything and make conversations replayable for audit and troubleshooting.

Make tools configurable to easily toggle human-in-the-loop confirmations.

When writing CRUD PL/SQL APIs, don't assume the consumer is APEX. Your PL/SQL APIs must be hardened to handle calls from unexpected future sources, such as agents.

Watch the context. Each call to the LLM passes the completed conversation history. As a conversation builds (especially if you have multiple tool calls returning large amounts of JSON), the LLM has to wade through more and more context to figure out what the latest request is. Consider capping the number of turns or preventing further turns after the context reaches a certain size.

Enable parallel tool calls when calling the LLM API to reduce turns (switching between the user and the model in the Agent Loop). For example, if you want to copy-paste 10 questions to add, enabling parallel tool calls allows the agent to request that the create tool be called 10 times in one turn rather than one at a time. This allows the user to confirm creation of the items once, not 10 times, and reduces token usage. Parallel tool calls also reduce the amount of time the user must wait for their request to complete.

When something fails (e.g., you get a PL/SQL exception during a tool call), do not pass the Oracle error message back to the LLM. Instead pass something meaningful like "the project team tool is not responding". This allows the LLM to fail gracefully and inform the end user.

Do not allow your agentic loop to run forever. Set a maximum number of iterations where you end the loop no matter what. Make this configurable so you can adjust it during testing.

Each LLM API call takes between 2 and 10 seconds to run. If the agent has to call the LLM several times during a request, the overall duration can add up quickly. You can influence this by playing with the model and the reasoning level (the higher the reasoning level the more the model thinks and the longer it takes). Use the fastest model with the lowest reasoning level which still gives good results for your use case. You can also help by improving the user experience while they wait. As you will see in the demo below, we took the time to build a custom blocking spinner that is displayed while the agent is working.

Demo

A picture is worth a thousand words, as they say. This short video shows a typical session with the Agent.

Projects are structured by sections and sub-sections.

The context area provides context for the user's prompt.

I did not show it in the demo, but the response includes links that let users open Questions, Risks, and Issues directly from the agent. This makes use of existing APEX pages.

As you can see, the user can take a question through its full lifecycle without leaving the page. This demo only shows you a fraction of what is possible with just five tools. Some other sample prompts:

Find questions related to VAT Tax

- The search tool uses vector search to find questions, risks, and issues related to VAT.

Review the attached meeting transcript (copy-paste it into the Context field), extract all questions, risks, and issues, and organize them into subsection 20.

- This is pretty powerful. We used the LLM to analyze the meeting transcript and extract all questions, risks, and issues raised during the meeting. The LLM extracted them and then invoked the create tool multiple times to populate the database with questions, risks, and issues.

Behind the Scenes

Privileged users can enable diagnostics. Diagnostics show all of the records tracked during the conversation, including requests for tool calls and responses from tool calls. In the screenshot below, you can see the diagnostics for one turn from the demo video. The diagnostic records are identified with the brown '...' avatar.

We submitted a request, "answer question 110662 with yes"

The model took the question along with the system prompt and broke out the answer "yes" from the request. It then looked at the provided tools and requested that we call the

qri_searchtool to find the questionWe ran the tool (after checking the user had access), and returned the JSON result containing details of the questions we found

The LLM interpreted this tool response JSON, confirmed there is just one question, then requested we call the

update_qritoolThe

update_qritool requires a human confirmation, so the Orchestrator saved the tool request from the model and stopped to allow the user to click the Confirm/Reject button.After clicking Confirm, the Orchestrator called the LLM one last time with the result from calling the

update_qritool.The LLM decided it didn't require any more tool calls, which ended the turn.

Conclusion

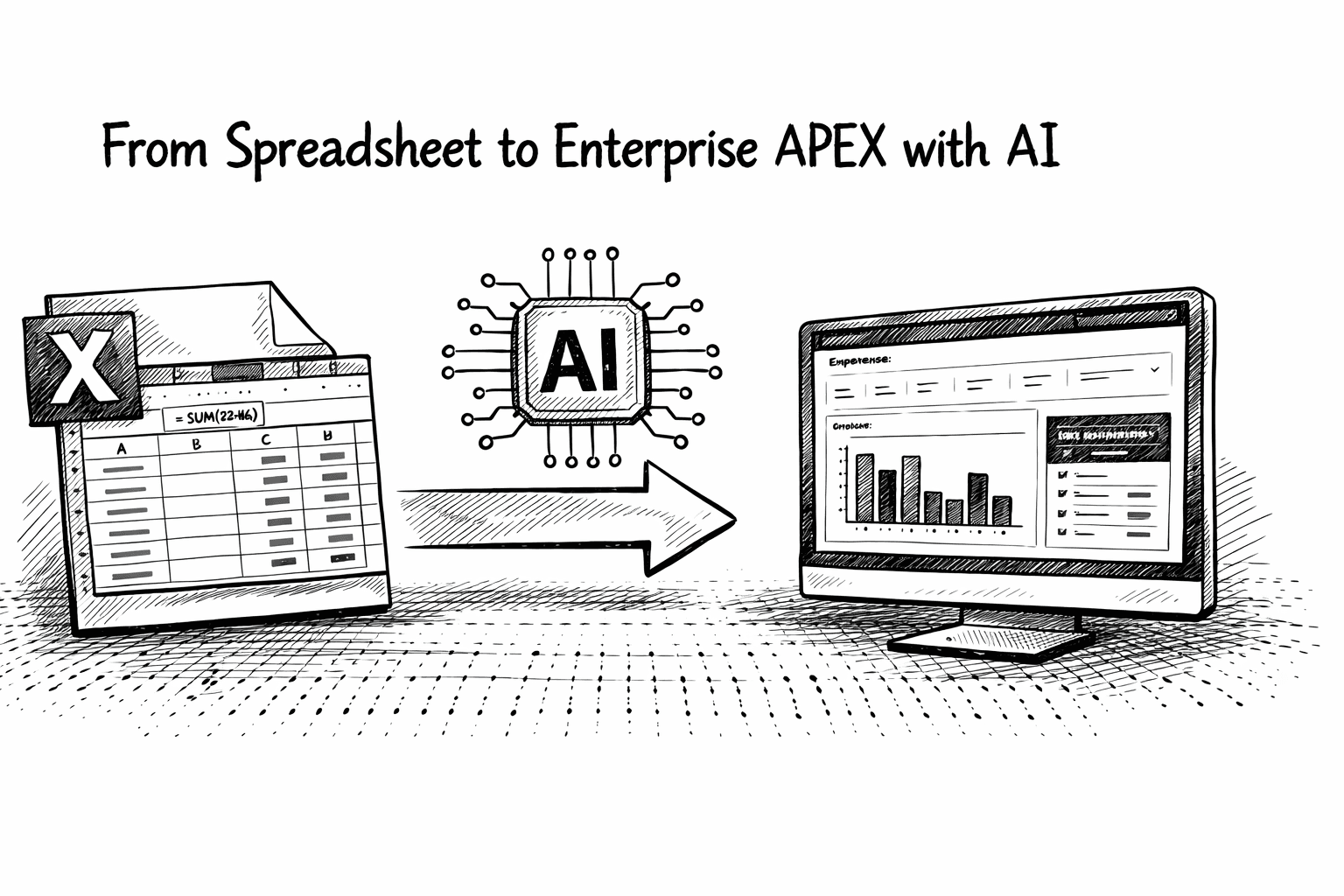

Adding an AI agent to an existing APEX application is a practical way to introduce AI capabilities without rewriting your system. Most applications already have the hard parts in place: a data model, business APIs, validation logic, and security rules. An agent simply becomes another consumer of those APIs.

The key is to keep the architecture straightforward. Let the LLM interpret user intent and suggest actions, but keep control in your code. The orchestrator manages the loop, the dispatcher validates and executes tools, and your existing PL/SQL APIs enforce business rules and data integrity.

Start with a limited scope, a small set of well-defined tools, and strong instrumentation. Once the architecture is in place, you can expand the agent’s capabilities incrementally.

In our case, just five tools were enough to let users search, create, and update project questions, risks, and issues directly from a chat interface. The result was a simpler workflow for users and a new way to interact with the application without changing the underlying system.

For teams already building applications with Oracle APEX, agents are a natural extension of the platform. The important part is not the model, it is the architecture around it.