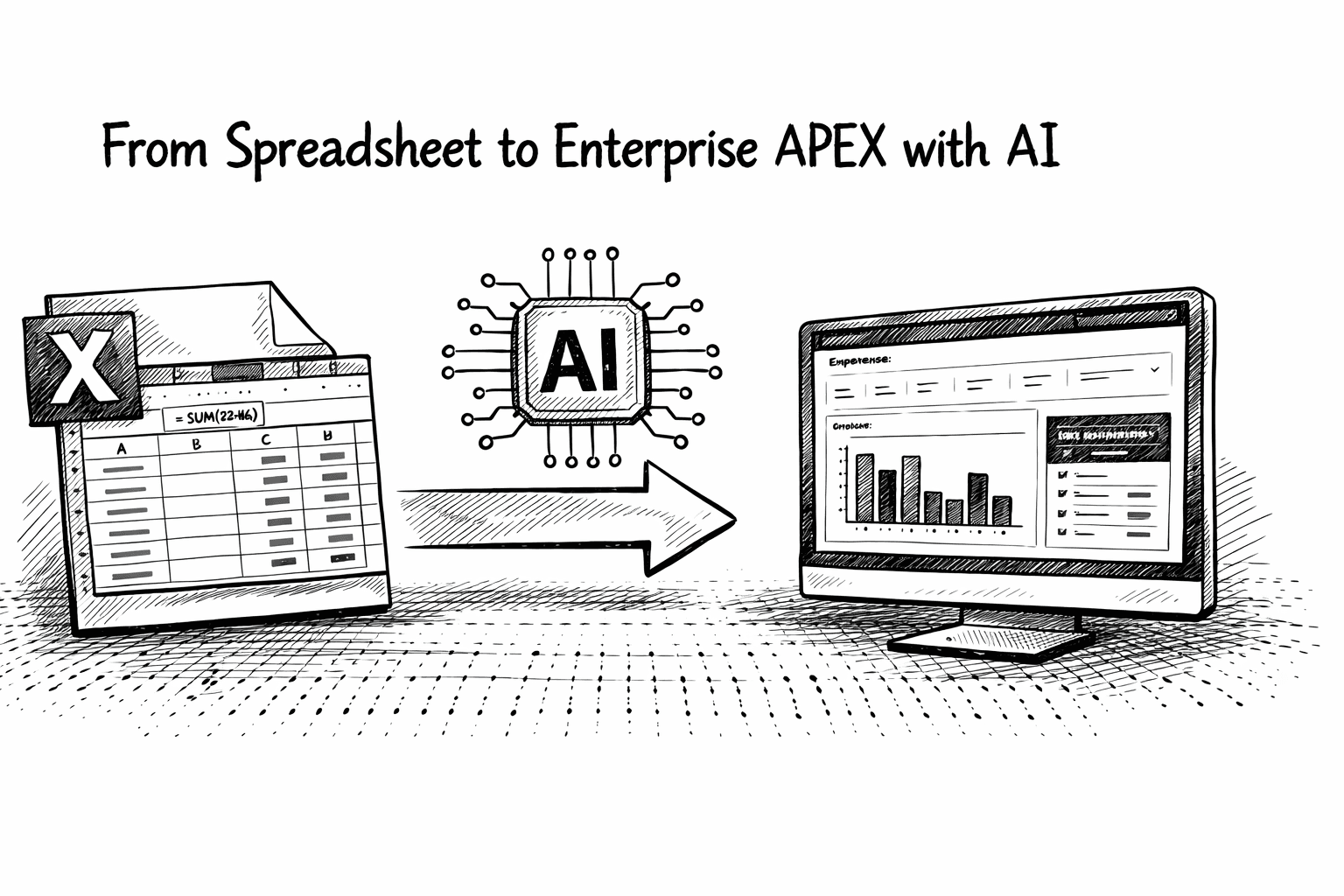

An AI Shift for APEX & PL/SQL Developers

Introduction

I have been using AI to help me build APEX Apps for well over a year now, and I’ve shared a lot about the tools and workflows I use. But something happened this week that felt like a genuine shift.

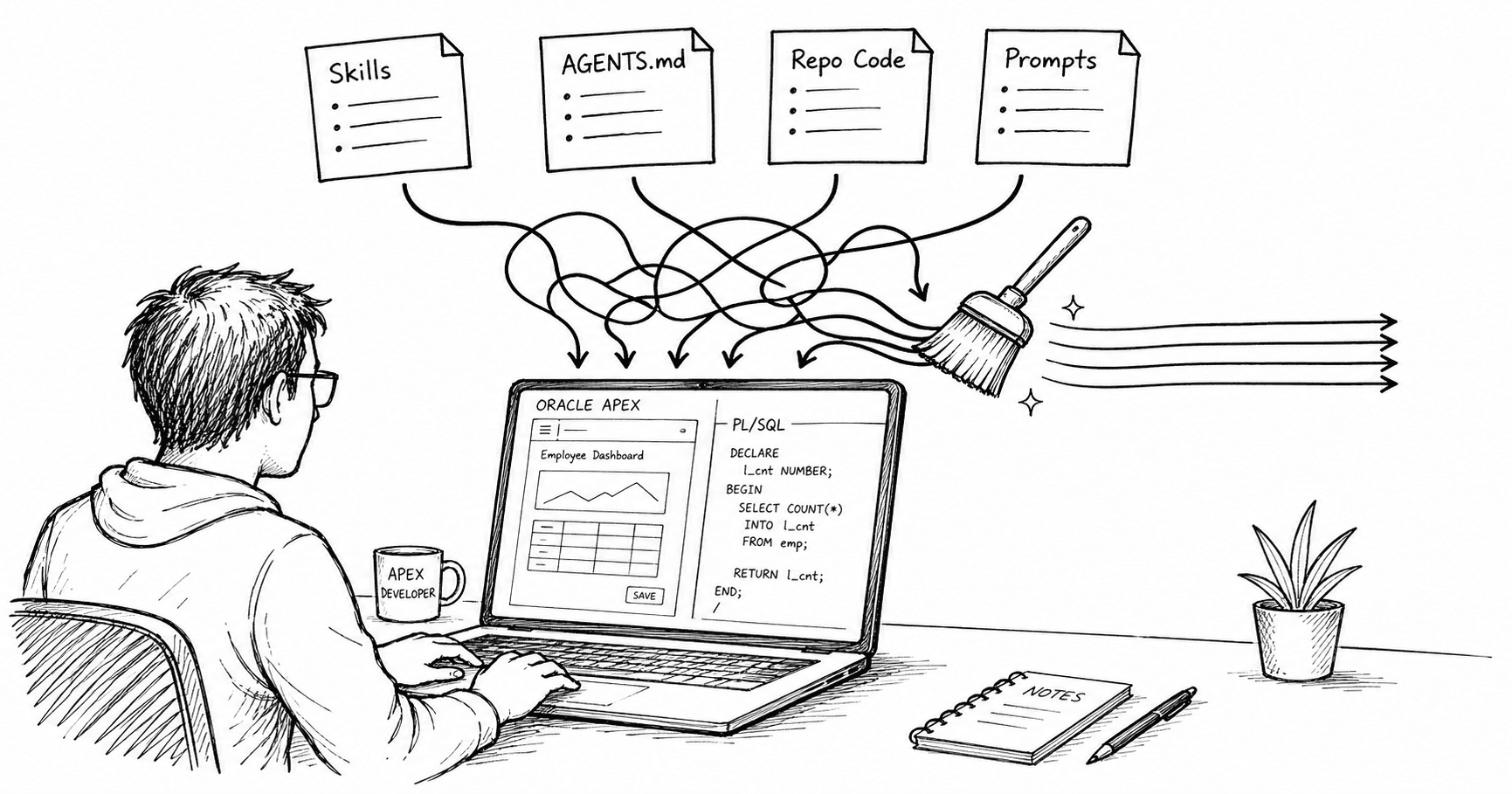

Usually, I use AI for things like autocomplete, understanding a codebase, and code reviews. I even use it for creating new procedures and functions, but the results vary in quality. This week, on two separate occasions, I had the AI generate hundreds of lines of PL/SQL, resulting in production-ready code (after review and testing). It included validations, handled edge cases I hadn't explicitly listed, and followed my approach perfectly. In this post, I want to break down the specific factors that made these efforts successful, even as others failed.

Metadata: The AI’s Rosetta Stone

The first key to this success wasn't the prompt; it was the database schema.

Clear, unambiguous column names.

Tables and columns have clear, plain English comments.

Foreign Key Constraints that clearly define the table relationships.

Check Constraints to define valid values for columns, where possible.

NOT NULL Constraints to identify which columns must be populated.

Unique constraints / natural keys.

Because I provided the AI with the DDL (including this extra metadata), it didn't just see a table; it inferred far more of the business rules with fewer guesses. It knew that a column named STATUS_CODE wasn't just a string, but a state-machine driver. When the metadata is clean, the model makes fewer incorrect assumptions.

The Spec is the Work

If I spend all this time writing a detailed spec for the AI, I could have just written the code myself.

This is something that I used to think until I realized I was wrong on two counts:

In most cases, I have to write a spec anyway, so the client can review it and I can be sure I am on the right path.

A well-thought-out spec can make the difference between average and near-perfect results when an LLM is generating code.

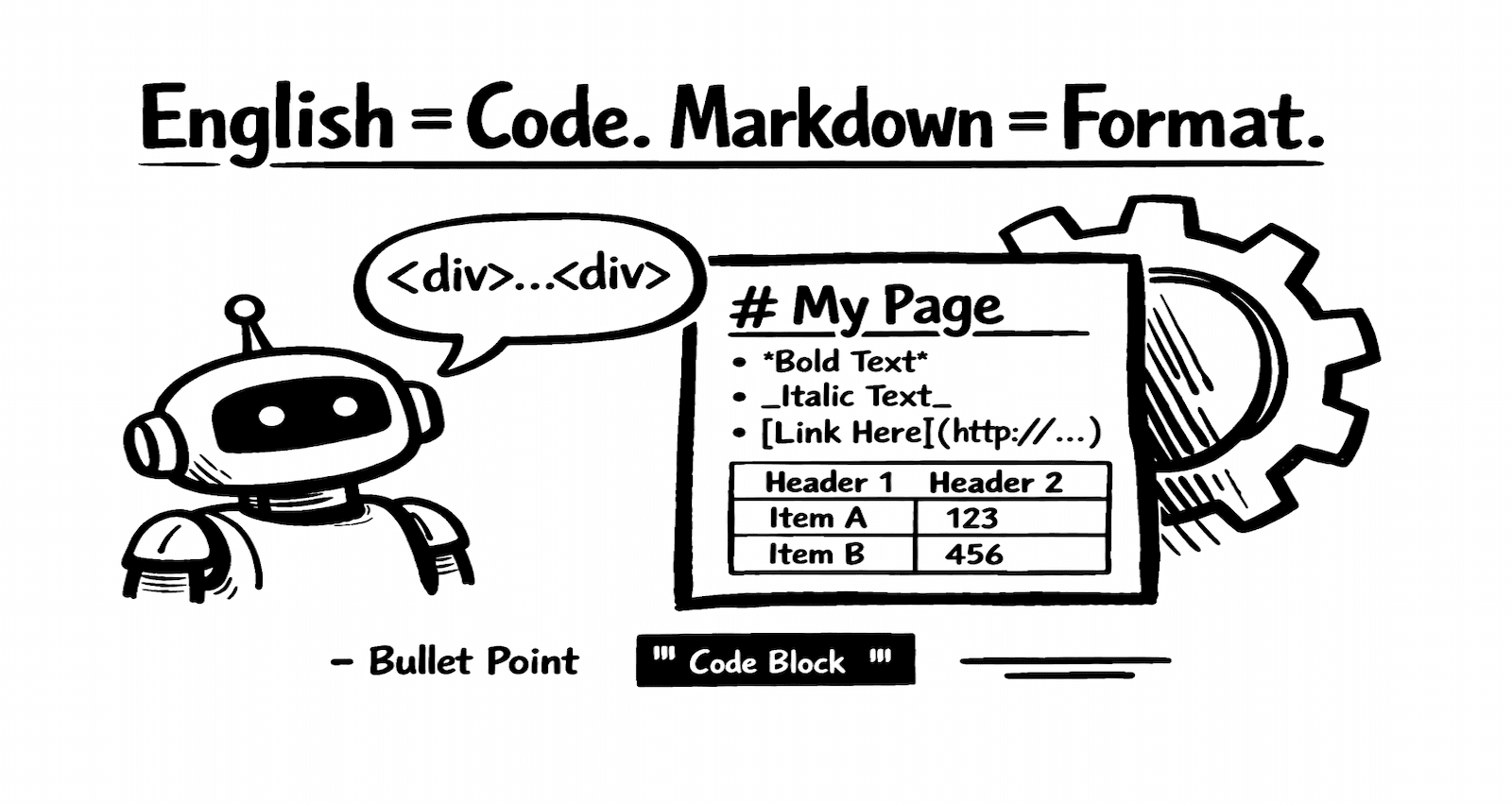

I did change the way I write my specs. I now write them in Markdown (using Obsidian) and export to Word using an Obsidian plugin that runs Pandoc if the client needs a Word copy. I also annotate the spec with hints for the AI, such as table names and references to procedures that perform similar logic. I make these annotations using HTML comments, which Pandoc excludes when exporting to Word.

Here is an example excerpt using HTML comments for annotations:

The App should then create a child RFQ and RFQ lines for each supplier.

<!-- AI > Use tables: SPTL_RFQ_SPLR_HEADER and SPTL_RFQ_SPLR_LINE -->

Leveraging Existing Patterns

In my case, AI wasn't starting from a blank slate. I was adding code to an existing package, and I also had other packages in the repository that the AI could reference for context.

In one instance, I had a specific pattern established for processing file uploads:

Upload records from Excel into an

APEX_COLLECTIONusingAPEX_DATA_PARSER.Run validations to check the uploaded records for errors.

Allow the user to review the validated records before final processing.

Perform the final import into the base tables.

I pointed the AI to two existing procedures that followed this pattern and said, "Follow the pattern in procedures X and Y, but apply the logic from the specification below…".

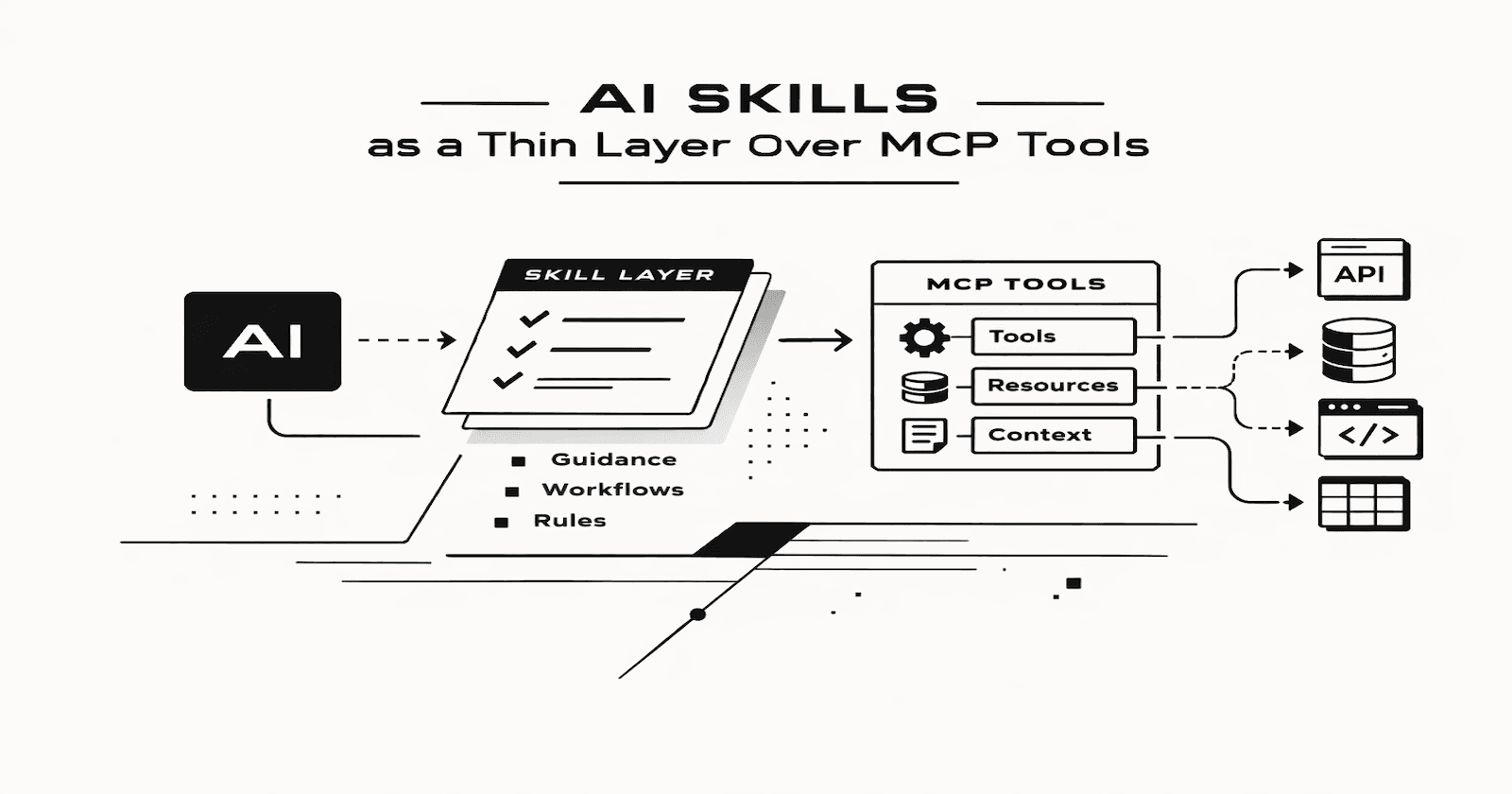

The Power of AGENTS.md

The final piece of the puzzle was the use of AGENTS.md. AGENTS.md is a file containing instructions that many coding agents, such as Codex, Cursor, and Claude, pass to the LLM along with your prompt. My AGENTS.md files are constantly evolving, but they typically include instructions like:

Use APEX PL/SQL APIs where possible

APEX_STRING,APEX_JSON, andAPEX_DEBUGover custom logic.Use set-based logic where possible instead of FOR loops.

Avoid Dynamic SQL wherever possible; if unavoidable, always use bind variables and validate identifiers (e.g., DBMS_ASSERT) to reduce SQL injection risk.

The folder structure of the codebase.

Prefer

%TYPEand%ROWTYPENo hard-coded schema names.

Always include

APEX_DEBUGcalls in exception handlers and major logic branches.Code formatting rules.

etc.

Without this file, the AI defaults to "generic" PL/SQL. With it, the AI becomes an expert in my specific preferences and standards.

Warning!

As I’ve said before, AI is a tool, not a crutch. The code you build is your responsibility (not the AI’s). For now, at least!

Understand the Output: Before committing the code, you should understand what it does and that it is doing what it is supposed to do.

Security is Your Job: I still manually check security settings at the end of every project. AI can find vulnerabilities, but it shouldn't be the only one looking.

Test, test, and test again: AI does not replace testing, though it can help with it.

The AI-Generated Code Checklist

Clean DDL + constraints + comments included

Markdown spec with rules + edge cases

Reference 1–2 existing “golden” procedures

Repo instructions (AGENTS.md)

Run tests + security review + performance sanity check

Conclusion

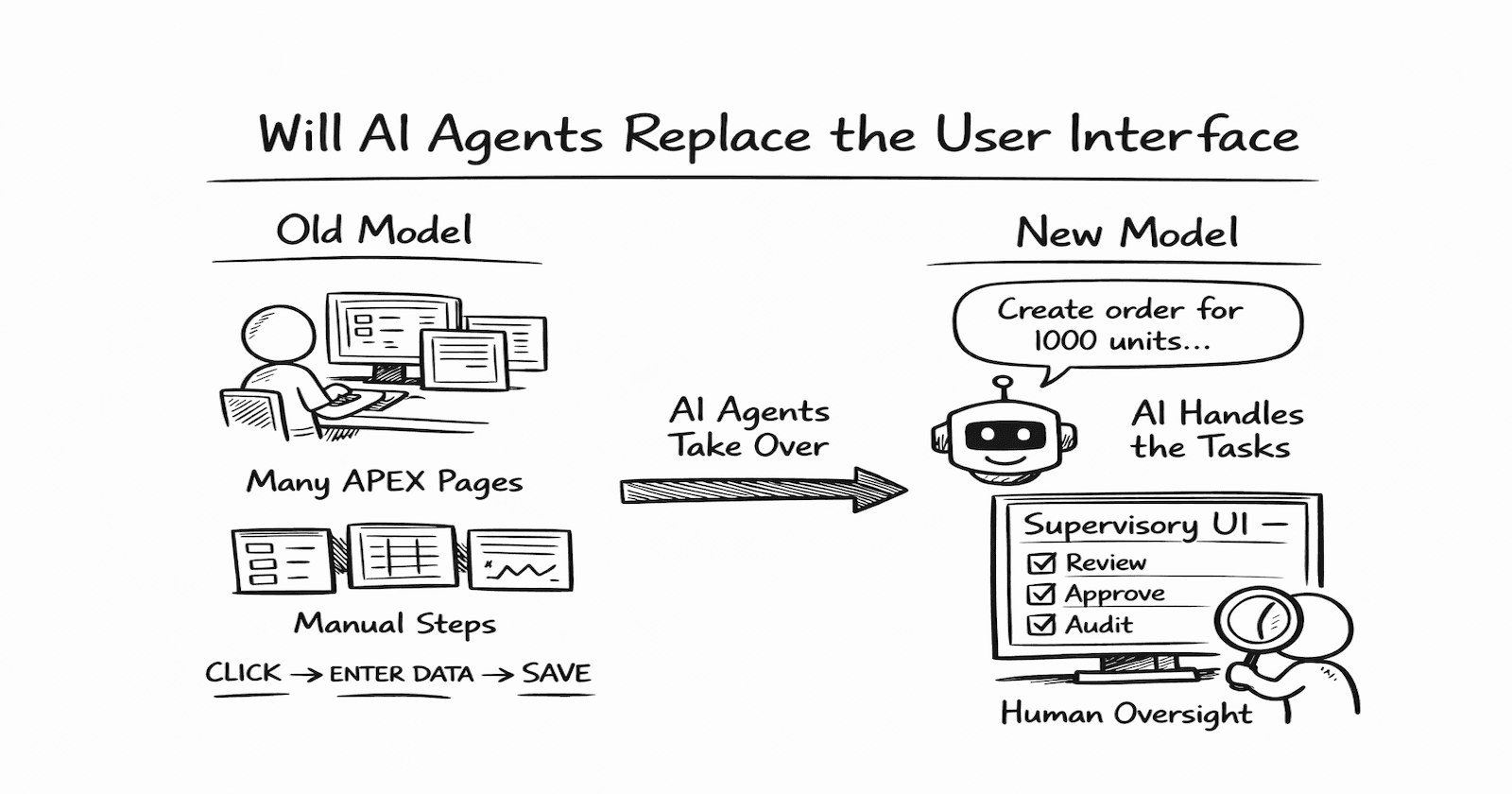

This week proved that we are moving toward a world where the APEX Developer acts more like a conductor than a member of the orchestra. I think I am OK with this, but it does take some getting used to.

If your database design is solid, your patterns are consistent, and your requirements are clear, the actual coding becomes a commodity. The AI didn't just save me time; it allowed me to stay in the "flow state" of designing the solution rather than getting bogged down in the syntax of a 300-line package body.

If you haven't reached this inflection point yet, stop focusing solely on the "prompt" and start considering the context you provide to the AI.

Exciting times ahead!