Will AI Agents Replace UI, or Redefine It?

Introduction

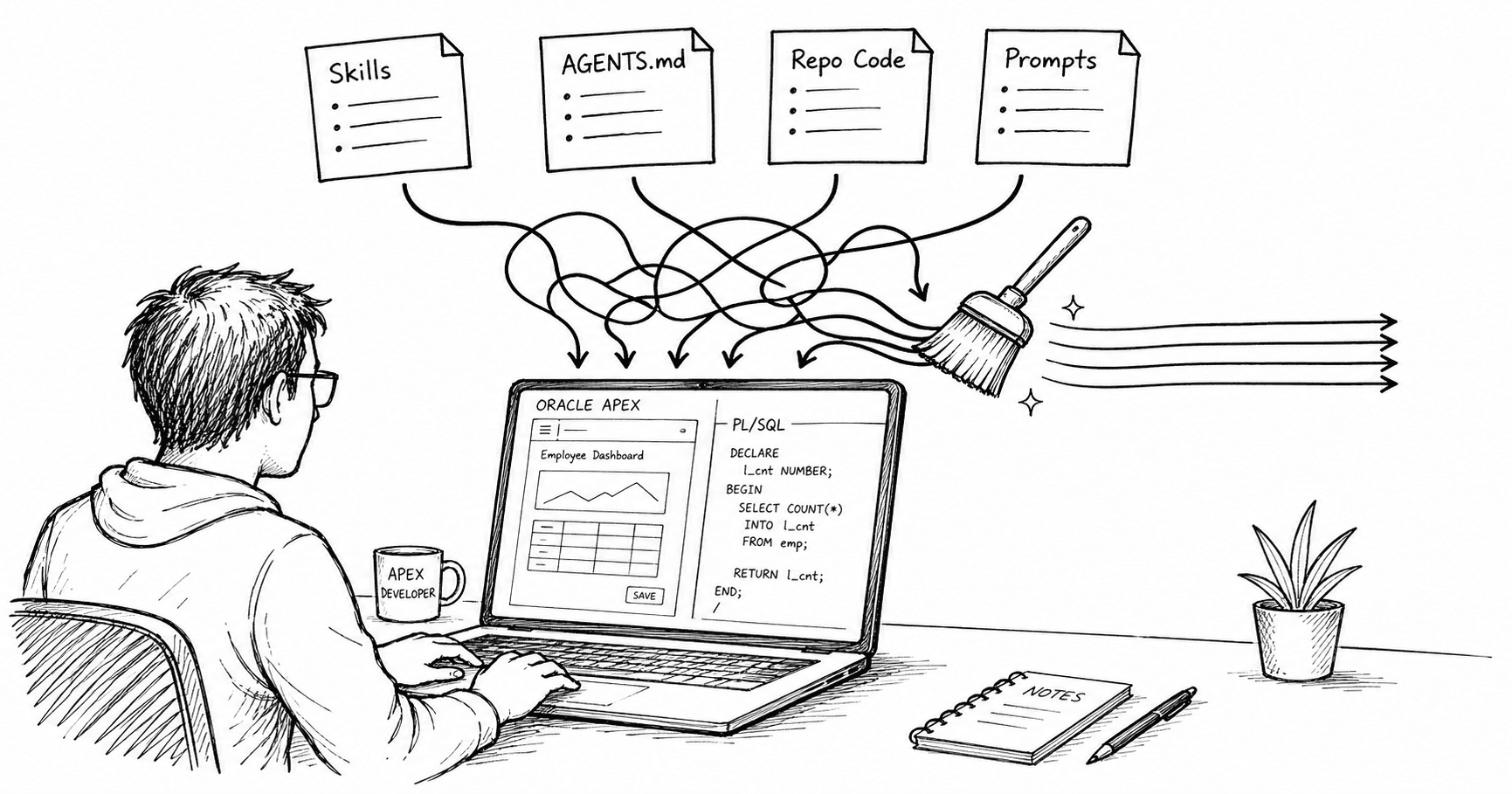

In a previous post, Adding an AI Agent to an Existing APEX App, I walked through doing exactly that. The goal was to simplify the user interface by letting users drive the app through an agent with a simple text interface.

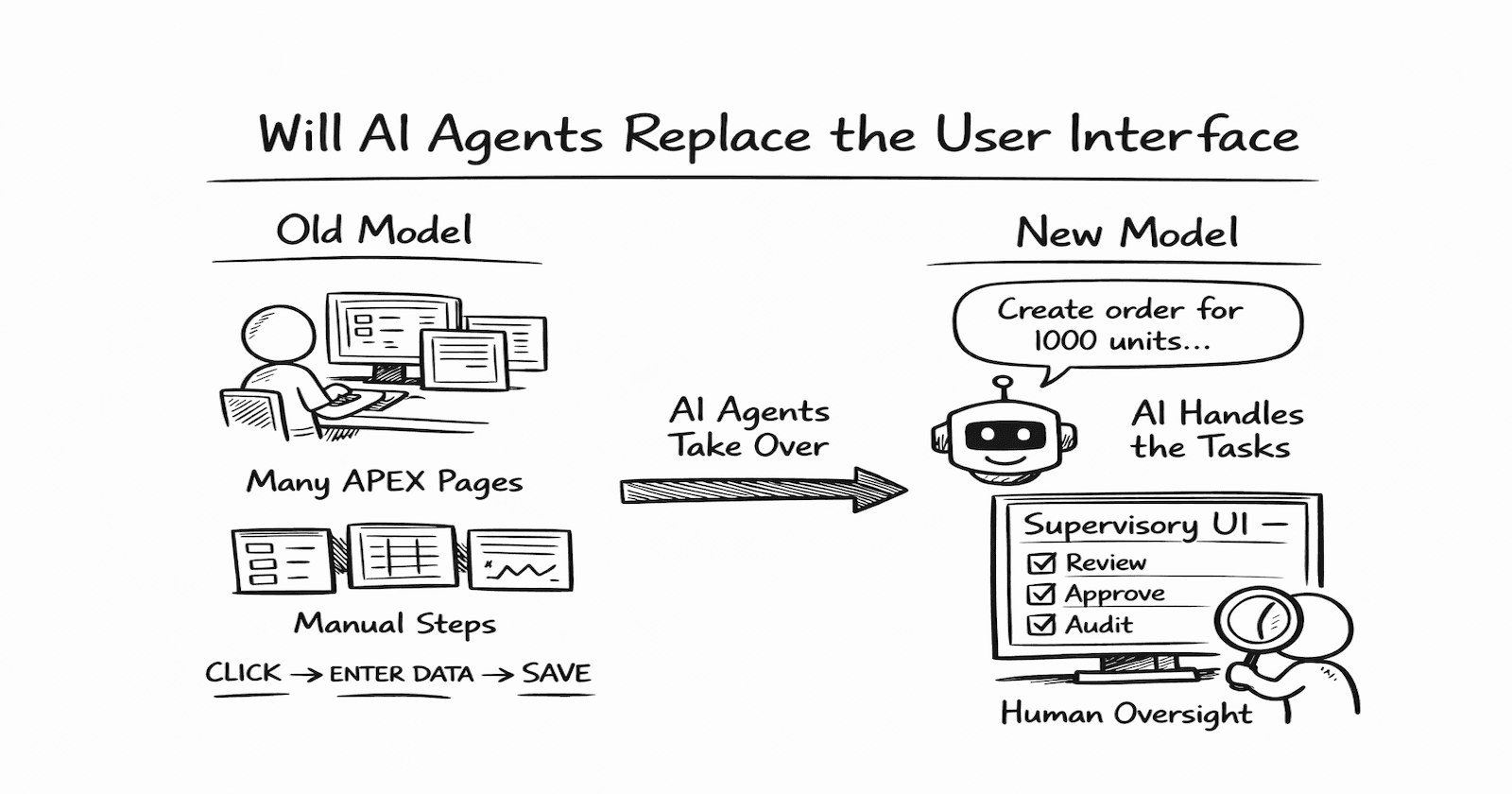

As an APEX developer, this got me thinking: Are we heading towards a future where there is less focus on building APEX pages and more focus on building AI agents and the controls they require? Could agents completely replace UI?

I do not think agents will replace the user interface. But I do think they will redefine it.

Why enterprise apps look the way they do today

For years, we have built APEX apps around a simple assumption: a user operates the app. They open a page. They find the right menu. They enter data into a form. They click save. Then they move to the next screen and repeat.

That model is so familiar that it feels permanent. But it is not. It is mostly a workaround for the fact that traditional software has needed humans to drive every step.

If an agent can understand an instruction, gather context, decide what steps are required, and carry them out across one or more systems, what exactly is left for the user interface to do?

That is no longer a theoretical question. It is becoming a practical one.

A lot of enterprise software still revolves around transaction entry, status updates, approvals, routing, and repetitive record management. In many cases, the interface is not valuable because it is a great experience. It is valuable because it is the mechanism the system uses to make the user do the work.

That is where agents become disruptive.

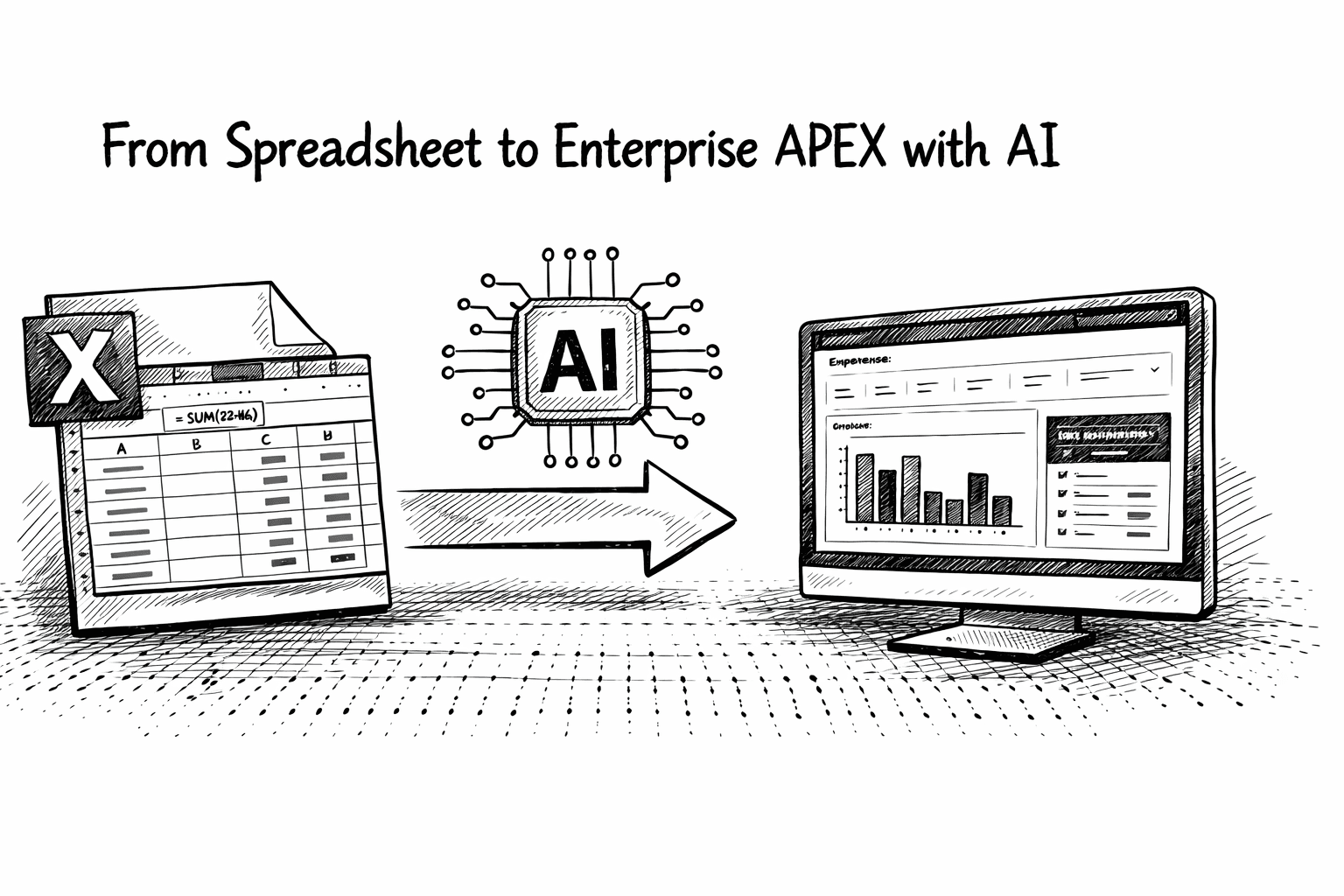

Instead of forcing a user to navigate five screens and populate twelve fields, the interaction could start with something much closer to natural intent:

Create a new customer for Acme (details for Acme can be found in the CRM system), generate a sales order for 1,000 Aztec 100s using standard new customer pricing, send it for approval, and remind me next Tuesday if it has not been signed.

We don't need many APEX pages to implement this!

From manual operation to delegated execution

The most important change is not that software becomes conversational. The real change is that software no longer requires the user to translate business intent into system steps.

That translation has defined enterprise UX for decades. Users have had to know where to go, what fields matter, what sequence to follow, what validations apply, and which screen comes next. The interface has been the place where human intention gets broken down into machine-friendly actions.

If an agent takes over that translation, the APEX app no longer has to be organized primarily around pages. It can be organized around goals, actions, and outcomes.

That is a major shift.

Where the “single text box” idea becomes useful

Once you accept that agents can handle more of the operational work, the obvious next question is whether the app can be reduced to a simple prompt box.

Probably not entirely, but for some tasks a prompt box actually makes sense.

Routine work is a strong candidate:

Create a new supplier using the attached invoice.

Open a support request for this issue...

Summarize sales by cost center for last month and compare it to this time last year

Put a credit hold on Acme Corp

Inactivate Item ABC

In those cases, the old interface often exists only because the system required structured, manual interaction. If the agent can reliably handle that structure, the screen becomes optional.

That is why this topic is not far-fetched. It points to a real weakness in much current software: too much of the interface exists because the software is rigid, not because the user actually benefits from the interaction.

The future is probably not just a text box

A text box is excellent for expressing intent. It is weak for verification, comparison, supervision, and control. That matters.

It is easy to say:

Reconcile these transactions and close the period.

It is much harder to trust that outcome without seeing:

What exceptions were found

Which records were changed

What assumptions were made where confidence was low

What could not be completed cleanly

What still needs human approval

That is why I do not buy the lazy version of the argument that “UI is dead” or that “everything becomes chat.”

The better argument is that AI agents may eliminate a large percentage of the UI that exists purely for manual execution, while making a different kind of UI more important than ever.

The UI does not disappear. Its job changes.

I think that is the real story. The interface of the future is less about entering data and more about supervising action.

That means the valuable parts of the UI become things like:

previewing what the agent is about to do

approving consequential actions

inspecting reasoning or decision traces

handling exceptions

reviewing changes across systems

enforcing policy and permissions

reversing or correcting bad outcomes

understanding what happened and why

That is still UI. It is just no longer centered on the idea that the user must manually drive every step of the workflow.

In fact, once agents take over more of the mechanical burden, the remaining interface becomes more strategic. It becomes the place where trust is earned.

Enterprise systems will change unevenly

Some interfaces are much more vulnerable than others.

Low-risk, repetitive, high-volume workflows are the easiest targets. Administrative tasks, routine service requests, report generation, standard approvals, record creation, and straightforward updates are all likely to be heavily compressed by agentic interaction.

Higher-risk workflows are a different story. Finance, healthcare, procurement, compliance, and other regulated workflows require more than just correct execution. They need visibility, auditability, traceability, and control.

In those environments, the agent may do more of the work, but the interface is not going away. It is becoming the control surface.

That is a very different design challenge from building page flows and forms, and it is more interesting.

What does this mean for us?

For a long time, the default design question has been: What pages do we need? That question is starting to look outdated.

A better set of questions is:

Which parts of this workflow truly require human judgment?

Which inputs are genuinely necessary?

Which fields exist only because the system cannot infer context?

Where can intent replace navigation?

Where can the agent act safely on the user’s behalf?

What needs to be visible before a human will trust the result?

How do we design for intervention, not just execution?

That changes how we think about app design.

It pushes us away from page-centric systems and toward systems built around delegation, observability, and recovery.

For enterprise platforms in particular, that is a serious shift. The future is not just better forms. It is designing the boundary between autonomous action and human control.

What happens to APEX?

If the future of enterprise software relies on agents executing tasks and humans supervising them, APEX is still well positioned, provided we change how we build.

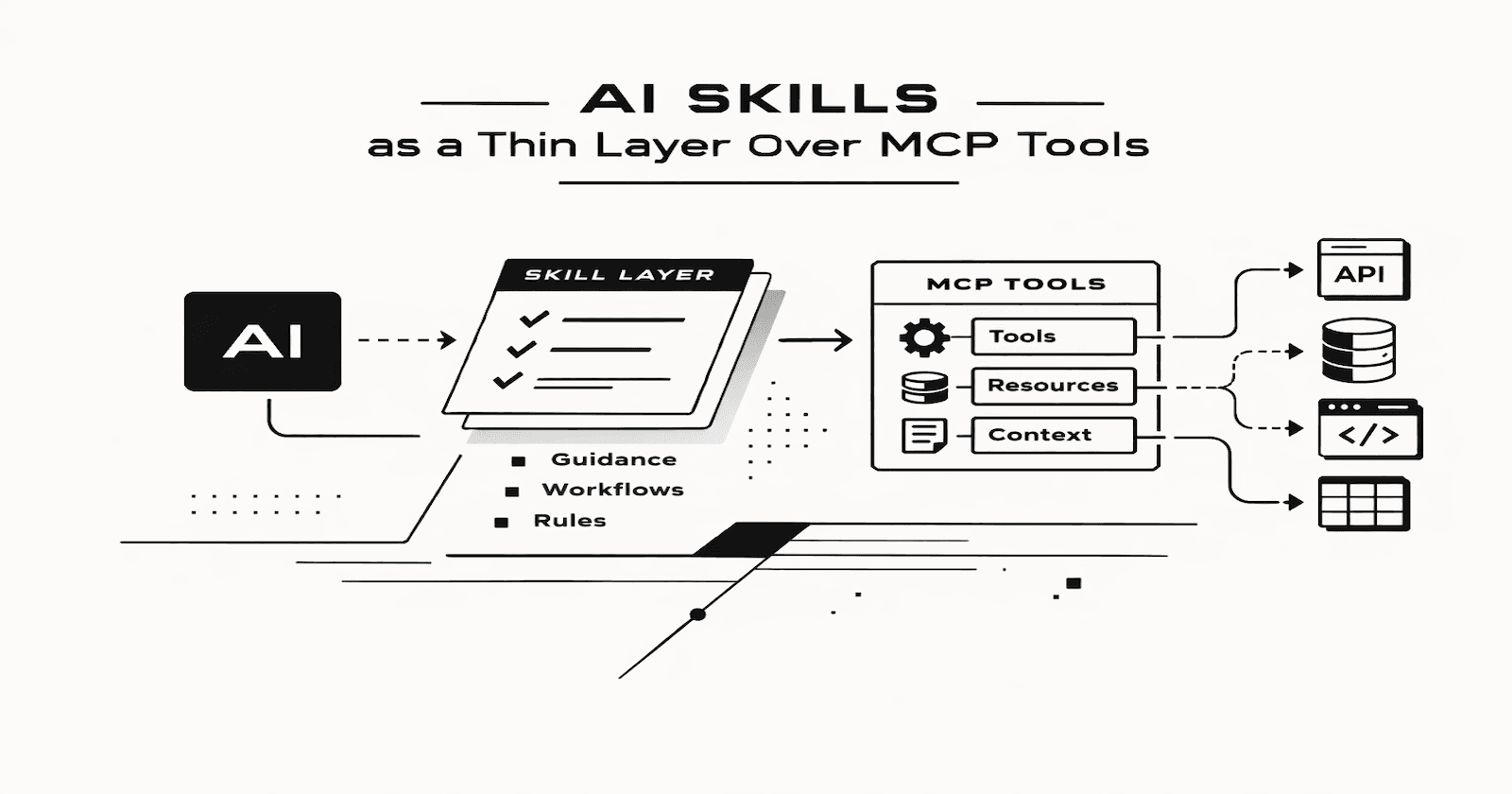

We need to stop thinking of APEX primarily as a rapid CRUD builder and start treating it as an Agent Control Plane. The infrastructure to build this supervisory UI already exists within the APEX ecosystem; it simply needs to be repurposed.

Here is how APEX architecture must adapt to an agent-driven model:

From Page Processes to Agent-Ready APIs: An agent cannot click a button to fire an APEX Page Process. Business logic must be rigorously decoupled from the UI. We need to expose it through strict, deterministic Oracle REST Data Services (ORDS) endpoints or self-contained PL/SQL packages. These become the literal "tools" the agent invokes to interact with the database.

Human-in-the-Loop via the Approvals Component: When an agent attempts a high-stakes action or encounters ambiguity, it should not fail silently. Instead, the agent's backend process can start an APEX workflow instance. The Unified Task List becomes the "Supervisory UI," where humans review the agent's proposed action, inspect its reasoning, and approve or reject the action.

Handling Asynchronous Agent State: Many AI agents operate asynchronously, often taking seconds or minutes to work through a multi-step problem. Traditional APEX pages are synchronous. To bridge this gap, we can use APEX background processes and APEX Automations to run agents in the background and use push notifications to send status updates to the client.

Auditability: In regulated environments, auditability requires more than a record of what changed. Future APEX apps will need dedicated agent log tables to capture the task, supporting evidence, tool invocations, confidence signals, performed validations, and a concise decision summary. That trace should surface alongside the business record in the APEX UI to establish trust and traceability.
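
The tool, approval, and audit ideas above can be sketched in a few lines. This is a minimal illustration in Python rather than APEX or PL/SQL, and every name in it (ControlPlane, Tool, TraceEntry, CONFIDENCE_FLOOR) is hypothetical. In a real APEX build the handlers would be ORDS endpoints or PL/SQL procedures, the pending entries would surface as tasks in the Unified Task List, and the trace would live in a log table. The 0.8 threshold is an arbitrary assumption.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable

# Hypothetical threshold: below this confidence, a human must review the action.
CONFIDENCE_FLOOR = 0.8


@dataclass
class Tool:
    """A deterministic action the agent may invoke (stand-in for an ORDS endpoint)."""
    name: str
    handler: Callable[..., Any]
    high_stakes: bool = False  # consequential actions always require approval


@dataclass
class TraceEntry:
    """One row of a hypothetical agent log table."""
    task: str
    tool: str
    arguments: dict
    confidence: float
    status: str  # "executed" or "pending_approval"
    result: Any = None
    logged_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class ControlPlane:
    """Registers tools, gates risky calls behind approval, and records every call."""

    def __init__(self) -> None:
        self.tools: dict[str, Tool] = {}
        self.trace: list[TraceEntry] = []

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def invoke(self, task: str, tool_name: str, arguments: dict,
               confidence: float) -> TraceEntry:
        tool = self.tools[tool_name]  # raises KeyError for unregistered tools
        needs_review = tool.high_stakes or confidence < CONFIDENCE_FLOOR
        entry = TraceEntry(task, tool_name, arguments, confidence,
                           status="pending_approval" if needs_review else "executed")
        if not needs_review:
            entry.result = tool.handler(**arguments)
        self.trace.append(entry)  # logged whether or not it ran
        return entry

    def approve(self, entry: TraceEntry) -> TraceEntry:
        """Called by the human reviewer; only now does the held action run."""
        if entry.status != "pending_approval":
            raise ValueError("entry is not awaiting approval")
        entry.result = self.tools[entry.tool].handler(**entry.arguments)
        entry.status = "executed"
        return entry


# Two illustrative tools; the bodies are placeholders for real business logic.
def inactivate_item(item_code: str) -> str:
    return f"Item {item_code} inactivated"


def place_credit_hold(customer: str) -> str:
    return f"Credit hold placed on {customer}"


plane = ControlPlane()
plane.register(Tool("inactivate_item", inactivate_item))
plane.register(Tool("place_credit_hold", place_credit_hold, high_stakes=True))

routine = plane.invoke("Inactivate Item ABC", "inactivate_item",
                       {"item_code": "ABC"}, confidence=0.95)
held = plane.invoke("Put a credit hold on Acme Corp", "place_credit_hold",
                    {"customer": "Acme Corp"}, confidence=0.97)
print(routine.status, "|", routine.result)
print(held.status, "|", held.result)

plane.approve(held)  # the supervisory UI would trigger this
print(held.status, "|", held.result)
```

The routine request runs immediately, while the credit hold waits until a human approves it, and both appear in the trace either way. The design choice worth noting is that the gate sits in the control plane rather than in the agent: the agent can propose anything, but it cannot decide for itself what counts as high stakes.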

Conclusion

If by “user interface” we mean page-heavy, form-heavy, navigation-heavy systems built around manual data entry and procedural interaction, then AI agents probably do mark the beginning of the end for that model in many cases.

But if by “user interface” we mean the layer where humans express intent, review actions, manage risk, resolve ambiguity, and stay in control, then no. The UI is not ending. It is being redefined.