Easy file Parsing with the APEX_DATA_PARSER API

Introduction

Almost all projects, whether custom APEX applications or full-blown ERP implementations, require you to import data from an external file. As Oracle developers, our peak productivity starts when we handle data using SQL. The quicker we can begin handling data using SQL, the faster development can be completed.

APEX_DATA_PARSER provides APIs to help us to:

- Analyze a file to understand its format and structure.

- Identify the field names and datatypes of the fields in the file.

- Use a SELECT statement to pull data from a file without developing any parsing logic.

APEX_DATA_PARSER supports the following file formats: CSV, JSON, Excel (XLSX), and XML

Before APEX_DATA_PARSER

Before APEX_DATA_PARSER, we have had to employ one of the following methods to get external file data into a table where we can process it using SQL:

- Use SQL*Loader to load data from a file into a staging table, then kick off a PL/SQL process to transform and load the data.

- Use UTL_FILE within PL/SQL to read data from a file on the file system.

- Use ‘External Table’ functionality within PL/SQL to read a file as if it were a table.

- Build a custom program using a language like Java to read data from the file, parse it and insert it into a table using JDBC.

All of these methods come with challenges, which include:

- None support parsing and loading JSON, Excel, or XML files.

- None of these methods easily handle the structure of your file changing.

- All these methods require you to copy the file to a specific location where the database can access it.

- SQL*Loader and External Tables require you manually build a definition file to define the structure of the file you are loading.

- UTL_FILE and External Tables require that you configure database directories to define the location of the external file on the file system.

- UTL_FILE requires that you manually build the parsing logic to split records into fields etc.

With APEX_DATA_PARSER

APEX_DATA_PARSER handles all of the challenges I described above (and more). It contains a series of APIs that allow you to discover the structure of a file and then parse the data in the file directly from a SQL statement.

For APEX_DATA_PARSER to parse your file, it must be in a BLOB format. You might be thinking if I have to get the file into a BLOB, then this isn’t solving the problem of having to move the file to a location that the database is aware of. Enter APEX_WEB_SERVICE. You can use APEX_WEB_SERVICE to first get the file BLOB from somewhere like OCI Object Storage and then consume the response from APEX_WEB_SERVICE using APEX_DATA_PARSER e.g.

SELECT line_number,col001,col002,col003,col004,col005,col006,col007,col008

FROM TABLE(APEX_DATA_PARSER.PARSE

(p_content => APEX_WEB_SERVICE.MAKE_REST_REQUEST_B

(p_url => 'https://objectstorage.us-ashburn-1.oraclecloud.com/n/nueva/b/nueva-public/o/MiscUploads%2FNOAA_Locations.xlsx',

p_http_method => 'GET'),

p_skip_rows => 1,

p_file_name => 'test.xlsx'));

Discovery

One of the most exciting features for me is the ability to analyze a file and generate a JSON document containing the metadata for the file.

SELECT APEX_DATA_PARSER.DISCOVER (

p_content => APEX_WEB_SERVICE.MAKE_REST_REQUEST_B

(p_url => 'https://objectstorage.us-ashburn-1.oraclecloud.com/n/nueva/b/nueva-public/o/MiscUploads%2FNOAA_Locations.xlsx',

p_http_method => 'GET'),

p_file_name=>'test.xlsx' ) as profile_json

FROM dual;

APEX_DATA_PARSER.DISCOVER returns a JSON document with details of the file encoding, delimiters, field names, and data types for each file's columns. Here is an excerpt from the above SQL:

{

"file-type":1

,"file-encoding":"AL32UTF8"

,"headings-in-first-row":true

,"xslx-worksheet":"sheet1.xml"

,"csv-enclosed":"\""

,"force-trim-whitespace":true

,"columns":[

{

"name":"ID"

,"data-type":2

,"is-json":false

}

,{

"name":"NAME"

,"data-type":1

,"data-type-len":255

,"is-json":false

}

...

This information is beneficial. For example, you can use it to write code to handle changes to the number of columns in uploaded files. Today your user uploads a file with ten columns; tomorrow, they add a column. Inspecting the format of the file allows you to handle that 11th column dynamically. This is something you could never do with previous Oracle file loader tools.

To make things even easier, there is a companion API to APEX_DATA_PARSER.DISCOVER called APEX_DATA_PARSER.GET_COLUMNS.

SELECT *

FROM TABLE(APEX_DATA_PARSER.GET_COLUMNS(

APEX_DATA_PARSER.DISCOVER(

p_content => APEX_WEB_SERVICE.MAKE_REST_REQUEST_B

(p_url => 'https://objectstorage.us-ashburn-1.oraclecloud.com/n/nueva/b/nueva-public/o/MiscUploads%2FNOAA_Locations.xlsx',

p_http_method => 'GET'),

p_file_name => 'test.xlsx' )));

APEX_DATA_PARSER.GET_COLUMNS produces a PL/SQL table of the columns from the JSON profile.

Parsing the File

Now that we know what our file looks like, we can use APEX_DATA_PARSER.PARSE to parse and process the data within the file using SQL.

SELECT line_number,col001,col002,col003,col004,col005,col006,col007,col008

FROM TABLE(APEX_DATA_PARSER.PARSE

(p_content => APEX_WEB_SERVICE.MAKE_REST_REQUEST_B

(p_url => 'https://objectstorage.us-ashburn-1.oraclecloud.com/n/nueva/b/nueva-public/o/MiscUploads%2FNOAA_Locations.xlsx',

p_http_method => 'GET'),

p_skip_rows => 1,

p_file_name => 'test.xlsx'));

APEX_DATA_PARSER.PARSE returns a generic table array with 301 columns. The line_number from the file and up to 300 columns of data from the file. File parsing happens on-the-fly as this function is invoked. Data does not write to a collection or a temporary table.

Although the columns names are generic (col001, col002, etc.), we know exactly what is in each column because we have the profile generated earlier. At every turn, APEX_DATA_PARSER is making it easier for you to deal with information about the file and the file itself using SQL.

Considerations

There are a few considerations that are worth noting.

‘Flat’ File Structure

Although it supports JSON and XML file types, APEX_DATA_PARSER can only handle data in a flat structure. You cannot, therefore, parse data from nested objects in JSON or XML. During the discovery of JSON files, APEX_DATA_PARSER will try and determine the primary array and parse the top level of that array only. You can also provide a ‘selector’ to choose which flat structure from the file you want.

Basic DATE Type Identification

APEX_DATA_PARSER seems to rely on clues in the formatting of date fields to identify the format string. For example, DD/MM/YYYY is not recognized as a DATE, while DD.MM.YYYY and MM/DD/YYYY are. It would seem that if it tried a few different approaches to determining if a field is a date and then test these format strings against the actual data, it could improve its success rate in identifying fields as dates.

Performance

APEX_DATA_PARSER uses underlying core database functionality to do the parsing where it can. For example, if you are on 11g of the database, it uses APEX_JSON to parse JSON. If you are on 19c or higher, it will use the native JSON parser. The native JSON parser is orders of magnitude faster than APEX_JSON. In view of this, the parsing speed can vary dramatically depending on the version of the DB you are on. This is very clever. By encapsulating file parsing logic within APEX_DATA_PARSER, you will automatically gain performance improvements when you upgrade from 11g to 19c, etc.

Conclusion

For on-premise and cloud-based ERP projects, a significant portion of the technology budget is spent converting data (e.g., items, customers, suppliers, orders, invoices, etc.) from a legacy system to a new Oracle ERP. APEX_DATA_PARSER can significantly reduce the cost and complexity of these conversions by removing the complexity of getting you to the stage where you can work with the file data in SQL.

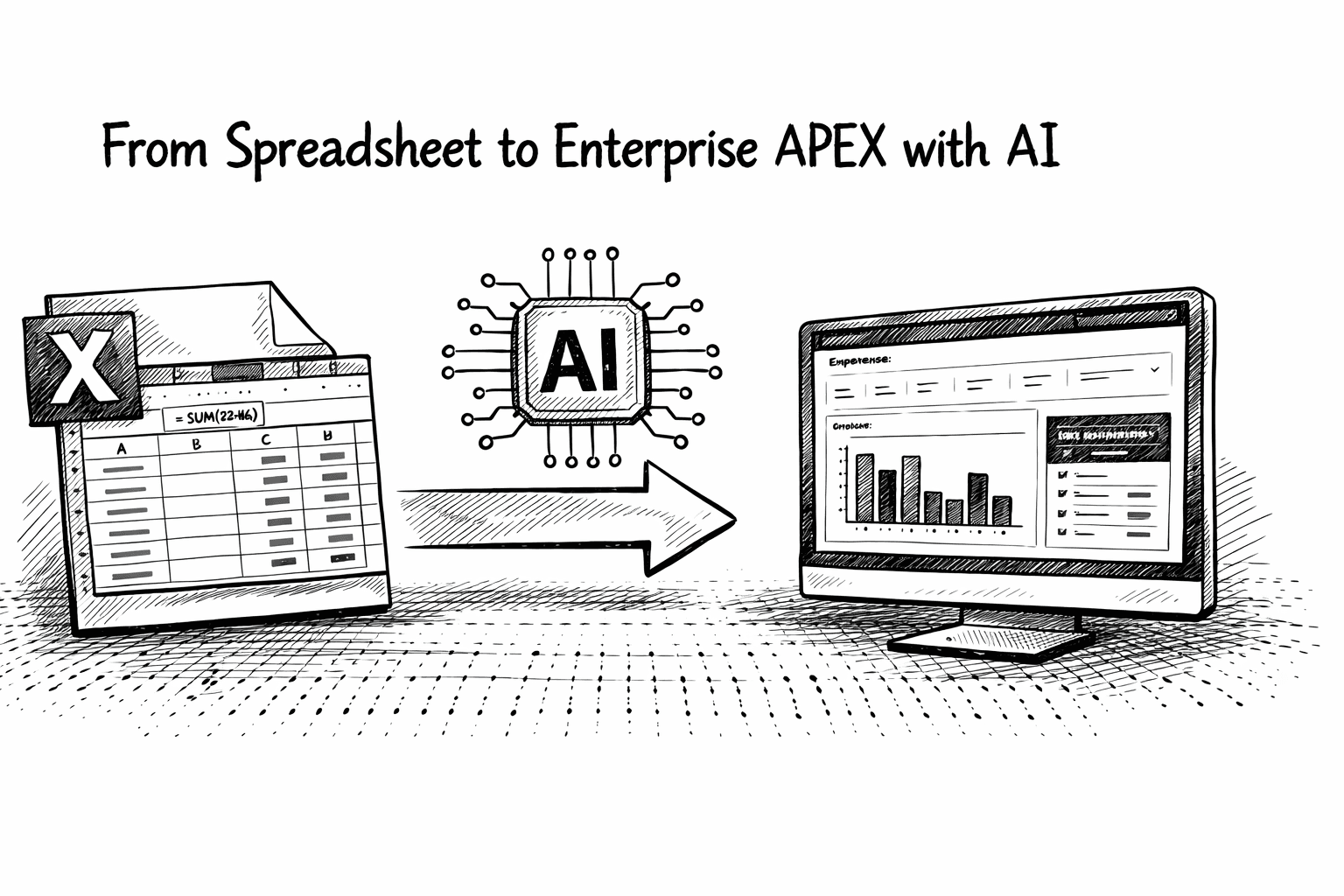

There are many more examples where APEX_DATA_PARSER can save you time and allow you to provide real business benefits. For example, you can save users from manual data entry by enabling them to upload data from an Excel spreadsheet. APEX_DATA_PARSER even handles fetching data from a specific tab of a spreadsheet.