Avoiding the Vibe Coding Rabbit Hole

Introduction

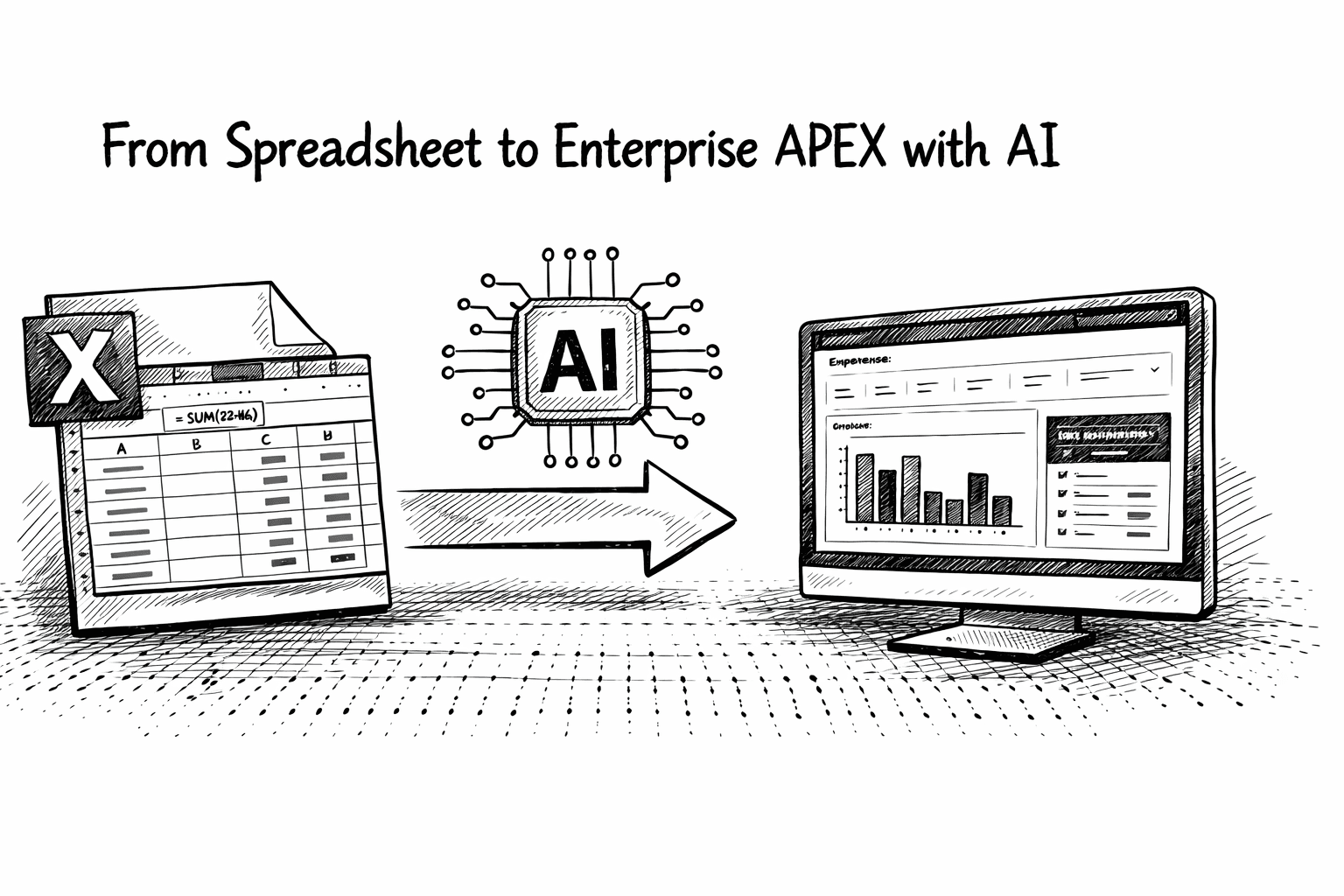

A few weeks ago, I started building an APEX 2nd brain to practice agentic AI in APEX and PL/SQL and, hopefully, to create a useful tool to supplement my aging brain.

I am writing this post while Codex rebuilds my APEX 2nd brain application from scratch. This post is a cautionary tale about what happens when you go down the vibe coding rabbit hole.

The Rabbit Hole

The first version of my 2nd brain APEX App included a simple text box on a single APEX page. When the user clicks the submit button, I pass a predefined prompt that provides instructions for filing the entry, along with the entry itself, to an LLM for classification. There was also an APEX Automation, which ingested my personal and work emails and calendar entries from Gmail and MS Office.
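A minimal sketch of that first version's classification step, written in Python for illustration rather than the PL/SQL the app actually uses. The category names, the prompt text, and the `call_llm` callable are all hypothetical, because the post does not show the real filing prompt. Checking the reply against a fixed list of categories is an added safeguard, not something the post describes.

```python
import json

# Hypothetical filing categories; the real ones are not given in the post.
ALLOWED_CATEGORIES = {"task", "idea", "reference", "person"}

# Hypothetical stand-in for the predefined filing prompt.
FILING_PROMPT = (
    "Classify the user's entry into exactly one of: task, idea, reference, person. "
    'Reply with JSON only, e.g. {"category": "task", "title": "..."}.'
)


def build_messages(entry: str) -> list[dict]:
    """Combine the predefined prompt with the user's entry as chat-style messages."""
    return [
        {"role": "system", "content": FILING_PROMPT},
        {"role": "user", "content": entry},
    ]


def classify(entry: str, call_llm) -> dict:
    """Send the entry to an LLM and validate the JSON it returns.

    call_llm is a hypothetical callable: it takes the message list and
    returns the model's reply as a string.
    """
    reply = call_llm(build_messages(entry))
    result = json.loads(reply)
    if result.get("category") not in ALLOWED_CATEGORIES:
        raise ValueError(f"unexpected category: {result.get('category')!r}")
    return result


if __name__ == "__main__":
    # A fake LLM so the sketch runs without any network access.
    def fake_llm(messages):
        return '{"category": "task", "title": "Renew passport"}'

    print(classify("Remember to renew my passport before June", fake_llm))
    # prints {'category': 'task', 'title': 'Renew passport'}
```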

It worked OK, but I soon found the features limiting (especially with all the news about what people are achieving using Open Claw). For the record, I do not use Open Claw.

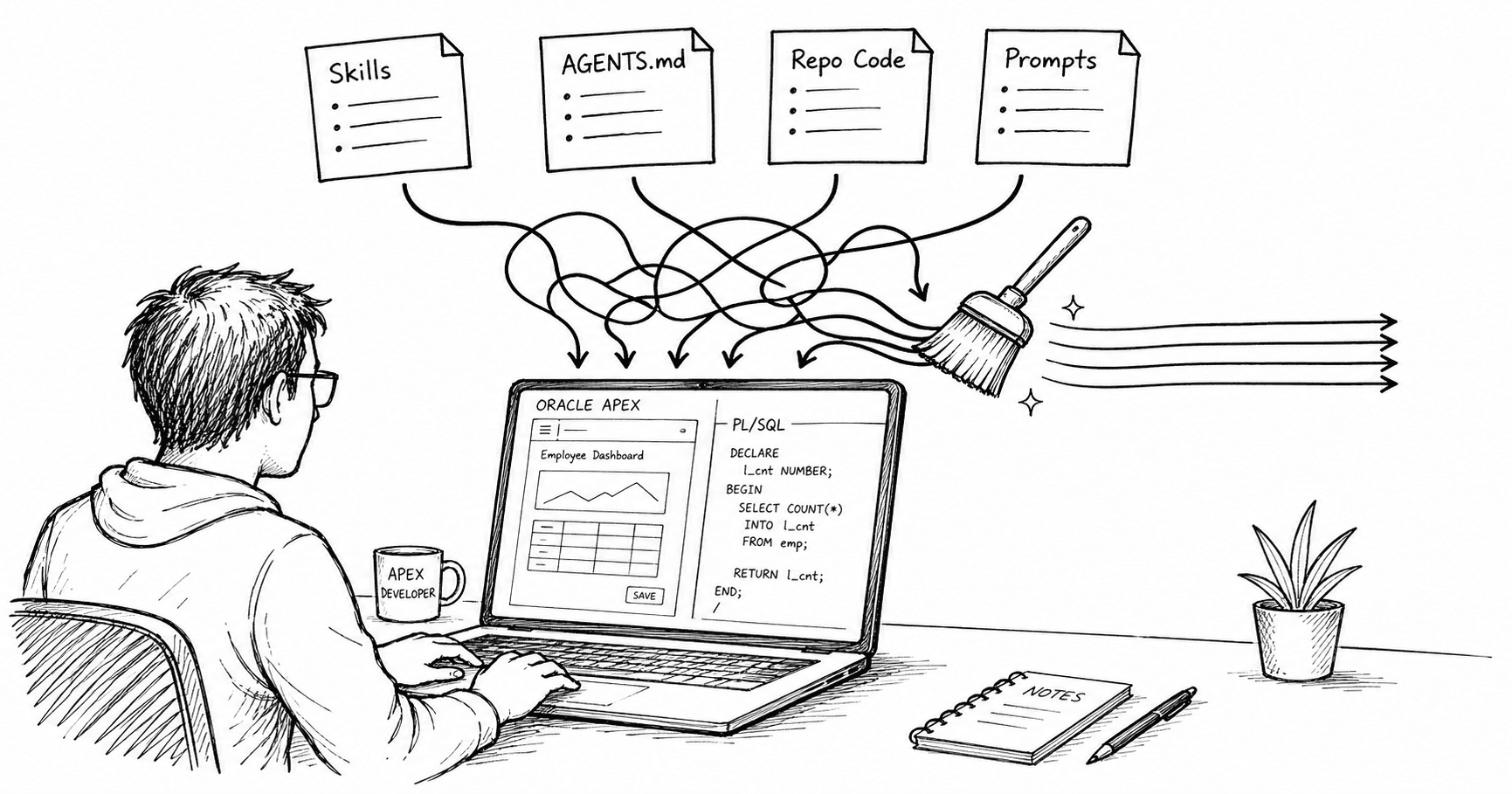

With the new Codex App and a prompt-first strategy, I started making enhancements. It was going great… I would ask for feature after feature, and they would get built and work 90% of the time. After a couple of hours, I stepped back and looked at the actual code that had been written:

I ended up with 800 lines of JavaScript in the main APEX page (that’s more JavaScript than I write in a year).

Instead of using my AI config tables, which store system prompts, tool calls, etc., the AI wrote the system prompts and tool-call JSON itself and hard-coded them.

The quality of the data model had degraded over time as fields were added, removed, and repurposed, and tables were abandoned. Each new table or set of tables was very well thought out, but there was no consideration for tidying up the old tables.

The AI was overly cautious about dropping old code. By the end, I was left with several views and packages that were no longer used. This amounted to more than 2,000 lines of unused code.

The other side effect was that the overall architecture had drifted and become overly complex and bloated. The issue isn’t the AI; it’s unbounded iteration without thought.

Essentially, it was the work of a competent and overly eager junior programmer. There were no egregious issues (other than not cleaning up old code), but it was not the way I would have done it.

Stepping Back & Resetting

Write a Specification

I decided to take a step back and reassess what I actually wanted, and spent an hour writing a detailed specification. You can read the specification here.

This produced two benefits:

It forced me to think about what features were important to me.

It provided the AI with much clearer guidance on what it was supposed to do. Instead of 10 disjointed prompts with feature requests, it had a single specification on which to build a solid architecture.

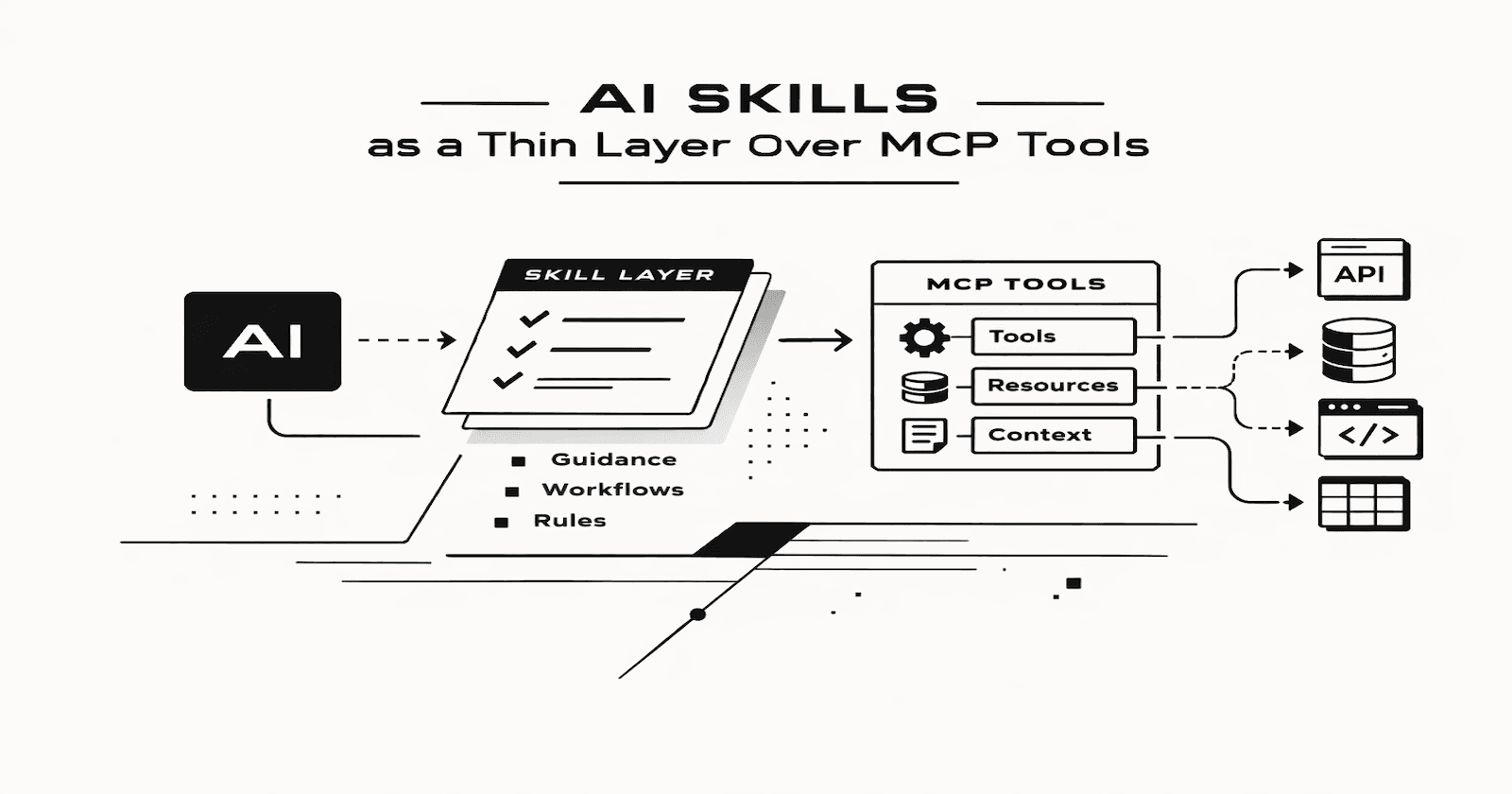

AGENTS.md

I also updated my AGENTS.md to include some additional instructions:

Prefer PL/SQL over JavaScript. When JavaScript is necessary, prefer Dynamic Actions over Ajax Callbacks.

Utilize AI Configuration tables gen_ai* to specify new prompts and tools.

Tell me if I suggest changes that contravene APEX and PL/SQL best practices.
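The point of the gen_ai* rule is that prompts and tool definitions live in data, where they can be changed and reviewed, rather than being hard-coded into page code. A minimal sketch of that idea, using Python and an in-memory SQLite database as a stand-in for the Oracle tables. The table and column names (`gen_ai_prompts`, `gen_ai_tools`, `prompt_code`, `tool_json`) are hypothetical, since the post does not describe the actual schema.

```python
import json
import sqlite3


def create_demo_schema(conn: sqlite3.Connection) -> None:
    """Create hypothetical gen_ai_* config tables and seed one prompt and one tool."""
    conn.executescript(
        """
        CREATE TABLE gen_ai_prompts (
            prompt_code   TEXT PRIMARY KEY,
            system_prompt TEXT NOT NULL
        );
        CREATE TABLE gen_ai_tools (
            tool_code   TEXT PRIMARY KEY,
            prompt_code TEXT NOT NULL REFERENCES gen_ai_prompts(prompt_code),
            tool_json   TEXT NOT NULL
        );
        """
    )
    conn.execute(
        "INSERT INTO gen_ai_prompts VALUES (?, ?)",
        ("FILE_ENTRY", "You file notes for a personal knowledge base."),
    )
    conn.execute(
        "INSERT INTO gen_ai_tools VALUES (?, ?, ?)",
        (
            "search_notes",
            "FILE_ENTRY",
            json.dumps({"name": "search_notes", "parameters": {"query": "string"}}),
        ),
    )


def load_prompt_config(conn: sqlite3.Connection, prompt_code: str) -> tuple[str, list[dict]]:
    """Read a system prompt and its tool definitions from config tables, not from code."""
    row = conn.execute(
        "SELECT system_prompt FROM gen_ai_prompts WHERE prompt_code = ?",
        (prompt_code,),
    ).fetchone()
    if row is None:
        raise KeyError(f"no prompt configured for {prompt_code!r}")
    tools = [
        json.loads(tool_json)
        for (tool_json,) in conn.execute(
            "SELECT tool_json FROM gen_ai_tools WHERE prompt_code = ? ORDER BY tool_code",
            (prompt_code,),
        )
    ]
    return row[0], tools


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_demo_schema(conn)
    prompt, tools = load_prompt_config(conn, "FILE_ENTRY")
    print(prompt)
    print([t["name"] for t in tools])
```

Adding a new prompt or tool then becomes an insert into a config table instead of another edit to page code, which is exactly the drift the instruction is meant to prevent.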

With AGENTS.md files littering your file system, it is important that you actively keep them up to date with the latest constraints and guardrails.

Lessons Learned

Always write a spec first. However simple it is, writing it out first helps you organize your thoughts and provides valuable guidance and structure for the AI.

LLMs ❤️ JavaScript more than PL/SQL. Unless you instruct them otherwise, they will generate far too much unnecessary JavaScript. They also love Ajax Callbacks; it’s not that they don’t know about Dynamic Actions, they just prefer Ajax Callbacks.

AGENTS.md is a live document; every time the LLM does something you don’t like, tell it by updating AGENTS.md.

After implementing a new feature, always follow up with a prompt to have the LLM check for unused code. More importantly, ask it to follow the entry points to your app and suggest entire branches of unused code. Also, run a check for data model drift. Codex is very good at doing this; it just needs to be told to do it.
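Following entry points to find dead branches is a reachability problem: start from what the app actually calls (pages, automations), walk the dependency graph, and anything never visited is a candidate for removal. Data model drift is a set difference between the tables and columns the spec expects and the ones that exist. A minimal Python sketch of both checks, assuming the dependency edges and column lists have already been exported; all object names are hypothetical. In Oracle, edges between stored objects could come from the USER_DEPENDENCIES view and columns from USER_TAB_COLUMNS, but references made from APEX page processes and automations are generally not recorded there, so the entry points are listed by hand. Dynamic SQL is also invisible to this kind of analysis, so treat the output as a review list, not a delete list.

```python
from collections import deque


def find_unreachable(deps: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Return objects that cannot be reached from any entry point.

    deps maps each object to the objects it references (caller -> callees).
    """
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:  # breadth-first walk from the entry points
        obj = queue.popleft()
        for ref in deps.get(obj, set()):
            if ref not in seen:
                seen.add(ref)
                queue.append(ref)
    all_objects = set(deps) | {ref for refs in deps.values() for ref in refs}
    return all_objects - seen


def schema_drift(spec: dict[str, set[str]], actual: dict[str, set[str]]) -> dict:
    """Compare the tables/columns the spec expects with what actually exists."""
    shared = sorted(spec.keys() & actual.keys())
    return {
        "missing_tables": sorted(spec.keys() - actual.keys()),
        "extra_tables": sorted(actual.keys() - spec.keys()),
        "missing_columns": {t: sorted(spec[t] - actual[t]) for t in shared if spec[t] - actual[t]},
        "extra_columns": {t: sorted(actual[t] - spec[t]) for t in shared if actual[t] - spec[t]},
    }


if __name__ == "__main__":
    # Hypothetical dependency graph: two entry points plus an abandoned branch.
    deps = {
        "PAGE_1": {"ENTRY_API"},
        "EMAIL_AUTOMATION": {"INGEST_API"},
        "ENTRY_API": {"ENTRIES_V"},
        "INGEST_API": set(),
        "OLD_CLASSIFY_PKG": {"OLD_ENTRIES_V"},
    }
    print(sorted(find_unreachable(deps, {"PAGE_1", "EMAIL_AUTOMATION"})))
    # prints ['OLD_CLASSIFY_PKG', 'OLD_ENTRIES_V']

    spec = {"entries": {"id", "body", "category"}}
    actual = {"entries": {"id", "body", "category", "category_old"}, "entries_v1": {"id", "text"}}
    print(schema_drift(spec, actual))
```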

When using plan mode in Codex or Claude (which I highly recommend), read the plan! This may sound obvious, but when I started out, I would just skim the plan and hit Go. Reviewing the plan is often your last good chance to steer the LLM before implementation begins. Adjustments made at this stage can save you hours later on.

If you start a thread with an LLM and get to around 5 turns, press pause ⏸️ to think. Are you on the edge of the AI 🐇 🕳️❓ Ask yourself: Will the next prompt really get me there, or should I start again with a better spec? The answer is usually the second one, but it is hard to step back!

Time for Controversy

With the major improvements made to coding in Claude Opus 4.5/4.6 and Codex 5.2/5.3, I have been asking myself whether I really need to ‘know’ all the code I create. If AI generated it, then surely AI will be better at maintaining it than I am?

My answer (at least for now) is that I do need to understand the code that the AI and I create.

I am responsible for the code, not the AI. It will be a dark day indeed when developers stop being responsible for the code they produce.

I still feel that my taste/instincts/intuition are better than the AI's. This is the main advantage humans have over AI (at least right now); we should make the most of it.

The AI is not close to catching all of the edge cases on its own. Even for this personal project, I added three edge cases to my AGENTS.md and went back to my spec a few times to guide the AI. We are still a long way off from a fire-and-forget approach to AI development.

Conclusion

Vibe coding is a superpower, right up until it quietly turns into your architecture.

The problem wasn’t Codex. It was me letting a long thread become the design process. The AI will happily keep shipping “reasonable” changes forever, but it has no instinct for simplicity, no taste, and no discomfort about leaving dead code and abandoned tables behind.

The fix also wasn’t “use less AI.” It was to put the AI back inside guardrails: a written spec, a clear APEX-first strategy (Dynamic Actions over callbacks, PL/SQL over page-level JavaScript), and an AGENTS.md that I actually maintain. Once those constraints are in place, AI is great, not just at building features but at tracing entry points, finding dead branches, and calling out drift. It just needs to be told to do it.

And on the “do I need to know the code?” question: for now, yes. Maybe I don’t need to know every generated line, but I absolutely need to own the architecture, the data model, and the edge cases, because the day something breaks (or leaks), it’s my name on it, not the model’s.