How I Use AI to Make Me a Better APEX Developer

Introduction

I have been using AI to help me build APEX Apps for more than a year, and the pace of change during that time has been amazing. In this post I will review the tools and workflows that that have helped me to improve as an APEX Developer.

I won’t be getting into how to set up these tools, just how I use them.

AI Subscriptions

I currently have the following AI Subscriptions:

Open AI Chat GPT Business ($300/year)

- I use this subscription for ChatGPT, APIs, and Codex

GitHub Co-Pilot Pro ($100/year)

AI Tools

These are the AI tools I am using today:

| Tool | Usage |

| GitHub Copilot in VS Code | Ghost Text / Autocomplete, Changes to PL/SQL code in the IDE. |

| Codex Plugin in VS Code | Agent mode changes made from VS Code. Working with SQLcl MCP Server |

| Codex CLI | Tasks I can let run in the background, e.g., Code Reviews, Generating Repository Documentation, Creating Draft Data Models, Refactoring Code, etc. |

| GitHub Copilot in GitHub Desktop | Auto-Generate Commit Message |

| Oracle SQLcl MCP Server | Talking to the Database. Tuning SQL, Understanding Data Models, etc. |

Auto-Complete / Ghost Text

I estimate that Ghost Text accounts for about 50% of my overall productivity gains with AI. This is the feature where the AI predicts your next step based on the surrounding code and provides a suggestion you can accept with the Tab key. It is especially useful for repetitive tasks, such as:

Writing

MERGEandINSERT INTOstatements. The AI leverages the schema and table structure currently open in the IDE (e.g., the columns and data types) to accurately auto-complete the statement after just a few initial lines.Automatically adding comments and apex_debug entries.

Autocompleting the

EXCEPTION WHEN OTHERSblock of a procedure or function.Applying a required change across multiple related code blocks.

Here is a short video showing me creating a new procedure.

Code Reviews

I use AI to do three different kinds of code reviews (of my own work):

End of Day Review of all changes made during the day

Ad-Hoc review of a PL/SQL package

Full Code Review

End of Day Review

At the end of every day, I like to run an automated code review of the changes I made that day. The Codex CLI makes this easy with the /review command.

Change to the root folder of the GitHub repository and start Codex, then type /review

Then choose what kind of review you want to do; option 2 is what I do.

Codex will then compare the last committed version with the changes and perform a review.

Ad-Hoc Review (PL/SQL Package)

Every so often, I like to do a complete review of a package. I use the Codex CLI for this so that I can run it in the background. The key here is to develop a prompt that reviews the code the way you would like it reviewed.

Here is the prompt I currently use:

# Code Review Objective

Perform a code review for the following files:

- `PLSQL/XXFN_RFQ_UTL_PKB.sql`

- `PLSQL/XXFN_RFQ_UTL_PKS.sql`

Begin with a concise checklist (3-7 bullets) of the sub-tasks required to complete this review; keep items conceptual, not implementation-level.

# Tasks

Review each file for:

- Logic errors

- Unused variables

- Lack of code reuse

- Security Concerns

- Poorly performing code

For each identified issue, state your assumptions and verify the potential impact before assigning severity.

# Output Instructions

- Document all findings in `RFQ_CODE_REVIEW.md`.

- Use a Markdown table with the columns:

- **File**

- **Line(s)**

- **Issue Type**

- **Description**

- **Severity** (Low/Medium/High)

- **Suggested Fix**

- Group issues by file and list findings sequentially within each file.

- If a file has no issues, include a row stating "No issues found" in the **Description** column, leaving the other columns blank for that row.

- Assign **Severity** based on the potential impact of the issue; if uncertain about severity or affected lines, specify your assumption and reasoning.

- For each finding, specify the affected line(s) precisely. For multi-line or file-wide issues, indicate the corresponding range or "entire file" as needed.

After reviewing and documenting, validate that all findings are clearly described and all required fields are filled in; if any instructions are ambiguous or criteria unmet, highlight them at the end of the output for clarification.

Complete Code Review

I run a code review of the complete codebase about once a week. Once again, I turn to the Codex CLI to run this so I can work on something else while it runs.

Here is the prompt I currently use:

You are acting as a senior Oracle APEX / PL/SQL architect and code reviewer.

Context:

- The repository contains one or more Oracle APEX applications, shared components, PL/SQL packages, functions, procedures, triggers, views, and other database objects.

- Assume it is used in a production or near-production environment.

- Focus heavily on correctness, security, performance, maintainability, and APEX best practices.

Your task:

Perform a **comprehensive code review** of the entire repo (APEX export files, PL/SQL packages, views, triggers, utility scripts, etc.) and then produce a **single Markdown report** as your only output.

General review focus:

1. **Correctness & robustness**

- Logic errors, edge cases, null/empty handling, and error handling.

- Transaction handling and commit/rollback discipline.

- Concurrency issues (locks, race conditions, serialization).

2. **Security**

- SQL injection risks (dynamic SQL, concatenated where clauses, using NVL with parameters, etc.).

- XSS / output escaping issues in APEX (unescaped substitutions, htp.p/owa_util.showpage usage, missing server-side validation).

- Session and authorization handling (APEX authorization schemes, access control logic).

- Sensitive data handling (logging PII, passwords, tokens, or secrets).

- Use of APEX substitution strings, bind variables, and item values in queries and PL/SQL.

3. **Performance & scalability**

- N+1 query patterns, repeated queries in loops.

- Missing or misused indexes, non-selective predicates, full table scans where dangerous.

- Inefficient PL/SQL patterns (unnecessary row-by-row processing, missing BULK COLLECT / FORALL where appropriate).

- Heavy computations in views vs. materialized views or caching strategies.

- APEX-specific performance concerns (expensive queries in report regions, interactive report/grid filters, LOVs with slow queries).

4. **APEX application design**

- Page process and branch logic clarity.

- Proper use of shared components (lists, LOVs, templates, authorization schemes).

- Hard-coded values vs. configuration tables/application items.

- Use of APEX APIs (APEX_APPLICATION, APEX_UTIL, APEX_PAGE, APEX_SESSION, APEX_DEBUG, etc.) and any risky or deprecated calls.

5. **Code quality & maintainability**

- Naming conventions for packages, procedures, variables, constants, and views.

- Comment quality and accuracy (misleading or outdated comments).

- Module boundaries and cohesion (what should be refactored into separate packages).

- Reusability and DRY violations (duplicate logic that should be centralized).

- Error logging and instrumentation (APEX debug, custom logging tables, structured error messages).

6. **Database design & views**

- View complexity and readability.

- Security of views (exposing too much data, missing filters, relying on client-side filters).

- Use of synonyms, grants, and schema separation.

Output requirements:

- Only output a single Markdown document called CodeReview.md

- No explanation or actions outside of the Markdown.

- Structure the report so that issues are easy to scan and the related code is easy to find.

Markdown structure:

1. # APEX Repo Code Review

- Short overview of the overall health of the codebase.

- 2–3 key strengths.

- 3–5 top-priority concerns.

2. ## Summary by Severity

- A bullet list showing counts:

- `Severe issues: N`

- `Moderate issues: N`

- `Low issues: N`

3. ## Detailed Findings

- Group by logical area, for example:

- `### PL/SQL Packages`

- `### APEX Pages & Processes`

- `### Views & SQL`

- `### Security`

- `### Performance`

- Under each area, list issues as subsections:

Example structure for each issue:

#### [Severity] Short issue title

- **Severity:** Severe | Moderate | Low

- **Location:** `path/to/file` (and object name, page number, or line range if available)

- **Description:**

- Clear explanation of what is wrong and why it matters.

- **Impact:**

- Security / correctness / performance / maintainability impact in 1–3 lines.

- **Recommendation:**

- Specific and actionable guidance on how to fix it.

- **Code snippet (before):**

- Show only the relevant lines, minimal but sufficient context

- **Suggested fix (after or pseudo-code):**

- Show improved version or a clear pseudo-code template

4. ## Pattern-Level Recommendations

- Describe any recurring patterns that should be globally fixed (e.g., unsafe dynamic SQL patterns, repeated logic for auditing, repeated branching logic in APEX).

- Provide 2 to 5 concrete "refactoring themes" that would significantly improve the codebase.

5. ## APEX-Specific Recommendations

- Suggestions for:

- Better use of shared components.

- Improved authorization and authentication handling.

- Performance tuning for heavy pages, reports, and LOVs.

- Hardening against XSS and misuse of substitution strings.

6. ## Quick-Win Checklist

- A concise bullet list of the most important fixes (in priority order) that the team should implement next.

Severity rubric:

Use the following rubric consistently:

- **Severe:**

- Can cause data corruption, security vulnerabilities, major logical errors, or severe performance degradation in realistic conditions.

- **Moderate:**

- Risky or inefficient patterns that can cause noticeable issues under load or over time, but not immediately catastrophic.

- **Low:**

- Style, readability, minor performance improvements, or best-practice alignment that helps maintainability but is not urgent.

Additional rules:

- Prefer **precision over volume**: do not list 100 trivial issues; focus on the most impactful ones, while still giving enough detail to be useful.

- When in doubt, **show code**: include short but focused code snippets in fenced blocks so developers can quickly locate and fix the problem.

- If something looks dangerous but you are not fully sure (based on the visible context), call it out as a **potential issue** and clearly say what assumptions you are making.

Now, perform this review and output only the Markdown report described above.

Oracle SQLcl MCP Server

Please see this post for more on how I use the Oracle SQLcl MCP Server.

Writing New Code

You will have noticed that, so far, I have not discussed writing brand-new code based solely on a prompt. This is where I start to get nervous about AI.

Lately, I have started having AI write individual PL/SQL functions and procedures. I have noticed a significant improvement here with the latest models (OpenAI 5.1). I see even better results when I am adding procedures and functions to existing packages. I am sure this is because the additional context helps AI write better code.

This, along with writing SQL statements (using the MCP server), is the sweet spot when it comes to generating new code with AI.

What About APEX

At the moment (before the release of APEX 25.2, 26.1), AI is quite capable of reviewing and providing feedback on APEX Apps. This can be done via SQL using the SQLcl MCP server (and the right prompts), or based on AI extracting information from an APEX export file.

When I run the code reviews (mentioned above), AI checks the exported APEX Apps, and I get just as many findings from APEX as from PL/SQL.

I find AI especially useful for:

Checking APEX security settings (SSP, Authorization Schemes Applied to Buttons, etc.).

Building custom UI Components (e.g., template components).

APEX AI Wizard

APEX 24.2 does have a Wizard that allows you to create basic Apps from a prompt (and or a spreadsheet). This is OK for one-off Apps, but I don’t think it is the long-term answer for building APEX Apps with AI (nor does Oracle).

APEXlang

APEXlang will be the future of building APEX Apps with AI. It is not out yet, but I saw a demo at this year’s Oracle AI World, and it looks very promising. Having a formal syntax (that an AI can learn) will unlock the ability to build brand new Apps and perform major refactors on your APEX Apps.

AI Controlled Browsers

When ChatGPT Atlas was released, I immediately logged into my https://oracleapex.com/ords instance and asked AI to write an App to track the service history for my car. I was pretty impressed, it used QuickSQL to generate a data model and then built a fully functional App (albeit relatively simple).

I think the future of AI-controlled browsers and APEX lies in automated testing. You can turn your test scripts into prompts and have the browser run them. Google’s AntiGravity IDE takes this a step further and can capture screenshots of the testing along the way.

How do I?

The final use of AI for me is asking ad hoc questions. I work for myself, so I don’t have colleagues to ask questions of around the watercooler.

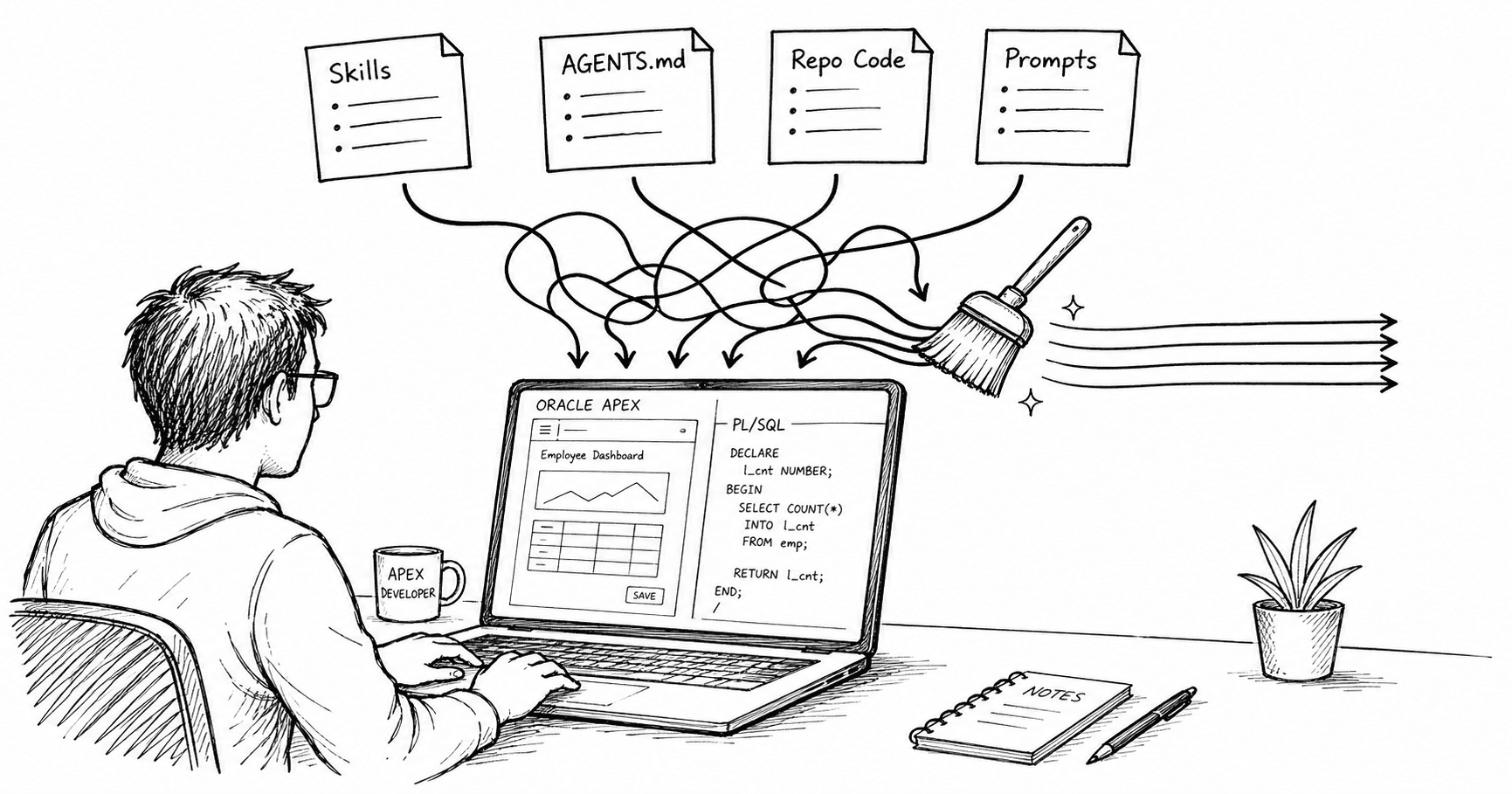

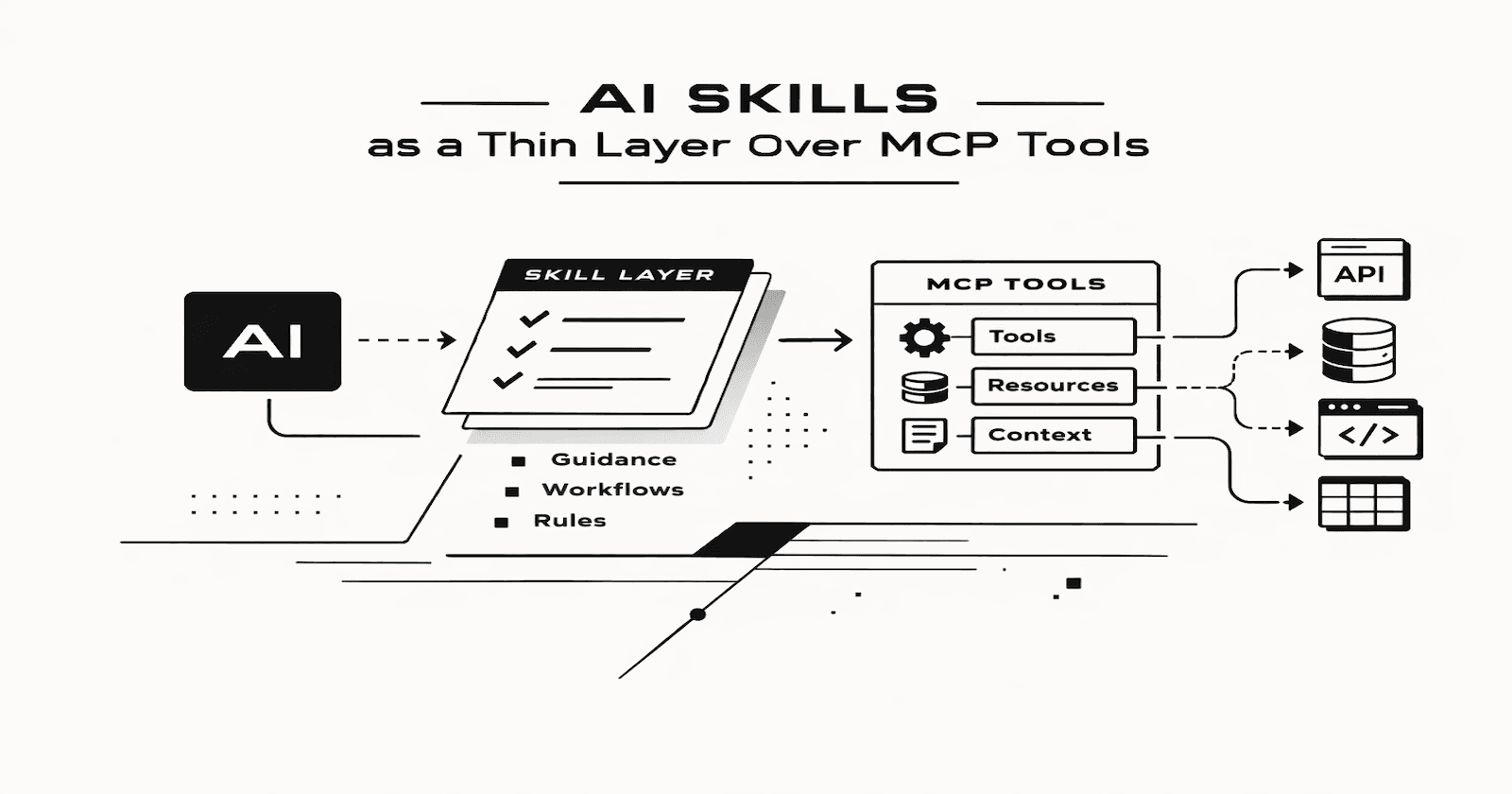

AGENTS.MD

I encourage you to spend an afternoon (or two) developing a robust AGENTS.md file for each of your code repositories. AGENTS.md gives you the ability to tell the AI what your naming conventions are, how you like to structure SQL statements, and general guidelines for how you want it to behave when generating code. Without it, it relies on your existing code base, and you will often end up spending as much time formatting your code as the time the AI saves you to start with.

I am not sure if AGENTS.md will become the de facto standard, but it is a better alternative than having AI coding agents use their own files to instruct the AI (.github/copilot-instructions.md, cursor.json, etc).

Warning!

There are a few things that I think you must keep in mind when using AI to help you code:

AI is a Tool, not a Crutch - There is no substitute for knowing what the code is supposed to look like. We all write the odd JavaScript snippet using AI without really understanding the output, but this should not be the default. If you are building code and getting paid for it, you'd better understand what it does!

Never Leave Security Entirely Up to AI - I mentioned above that AI does a good job of checking security settings in APEX. Even though it does, I always manually check security settings at the end of a project (using my own SQL statements or tools like APEX-SERT, ApexSec, or APEX Project Eye).

AI is Making me Dumber - There is no doubt in my mind that AI is making me dumber. When you rely on any tool for a period of time, your body adapts. When you buy a snow blower, the muscles you had from shoveling snow atrophy. The same can be said of AI. Does it really matter as long as you are getting the job done? It wasn’t a big deal when we adopted the calculator over mental math, but this may be different. Only time will tell.

AI Overload/Fatigue

Phillipp Hartenfeller recently posted the below comment in a LinkedIn thread, which really struck a chord with me.

I realized that I, too, am about 20% more efficient with AI than without. This productivity improvement, however, is both a blessing and a curse. I am now able to work on more projects at the same time, which means dealing with more people, more project constraints, more meetings, etc. There is also increased context switching (not just between projects, but also between my coding and checking the code that the AI is generating for me). This leaves aside the time it takes to learn and keep up with the latest AI developments.

Conclusion

Much is being said about the hype surrounding AI and the AI bubble. For developers, however, I believe the promise/threat (depending on which way you look at it) of AI is real. I see AI as an adapt or die situation for programmers. Having said that, we are still IT professionals, and it is incumbent on us to use AI professionally.

In a few months, most of this article will be out of date (not least because APEX 26.1 will likely have been released along with APEXlang).