Oracle SQLcl MCP Server with Codex & Copilot #JoelKallmanDay

Introduction

I have been using GitHub Copilot with Oracle’s SQLcl MCP server since its release in July 2025. The combination of Generative AI and databases is a powerful pairing that can help APEX developers build better products in less time. This statement comes with some caveats, which I will cover in this post.

In this post, I will describe how to set up the SQLcl MCP server in SQL Developer for VS Code, utilizing both OpenAI’s Codex and GitHub Copilot. I will also review several use cases and provide example prompts, which will help you get more out of this technology.

Configuring the SQLcl MCP Server

First, you will need to install the latest version of the SQL Developer Extension for VS Code and the latest version of SQLcl on your machine. You will also need to know the path to your SQLcl install.

In the following sections, I will describe how to set up Codex and GitHub Copilot to utilize the SQLcl MCP Server. Which tool to use is up to you. I currently use both, but am leaning toward Codex as my go-to development assistant.

Configuring GitHub Copilot

See the ‘Appendix 1 - Configure & Test GitHub Copilot with SQLcl MCP Server’ for details on how to set up and test the SQLcl MCP Server with VS Code.

Configuring OpenAI Codex

Install the OpenAI Codex Extension for VS Code

- Install the Codex Extension for VS Code.

Log in to your OpenAI / ChatGPT account

- Open the Codex extension > Click the settings gear icon > Log in

Configure Codex to use the SQLcl MCP Server

- Open the Codex extension > Click ⚙️ > MCP Settings > Open config.toml

- Once the file opens, copy and paste the below text into it (adjust your SQLcl path accordingly).

[mcp_servers.sqlcl]

command = "/opt/homebrew/Caskroom/sqlcl/25.3.0.274.1210/sqlcl/bin/sql"

args = ["-mcp"]

startup_timeout_ms = 60000

Testing the Setup

For the rest of this post, I will be using a saved connection called ‘DEMO’ in SQLDeveloper for VS Code:

Test from Codex with VS Code

Open the Codex Extension and type the following in the chat Window:

Once connected, ask:

You should end up with something like this:

Test from the Codex CLI

The SQLcl MCP Server also works with the OpenAI Codex CLI.

How it Works

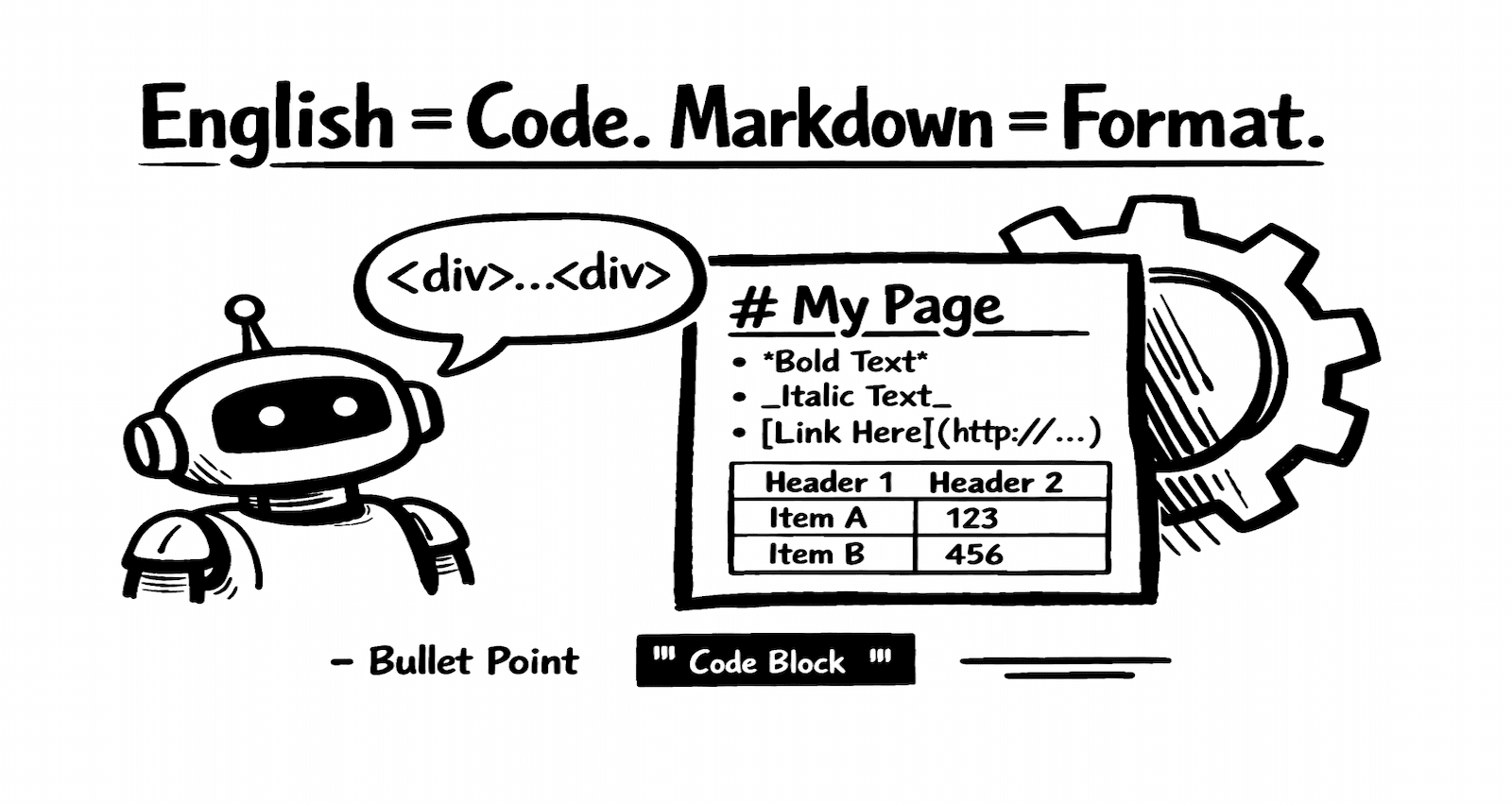

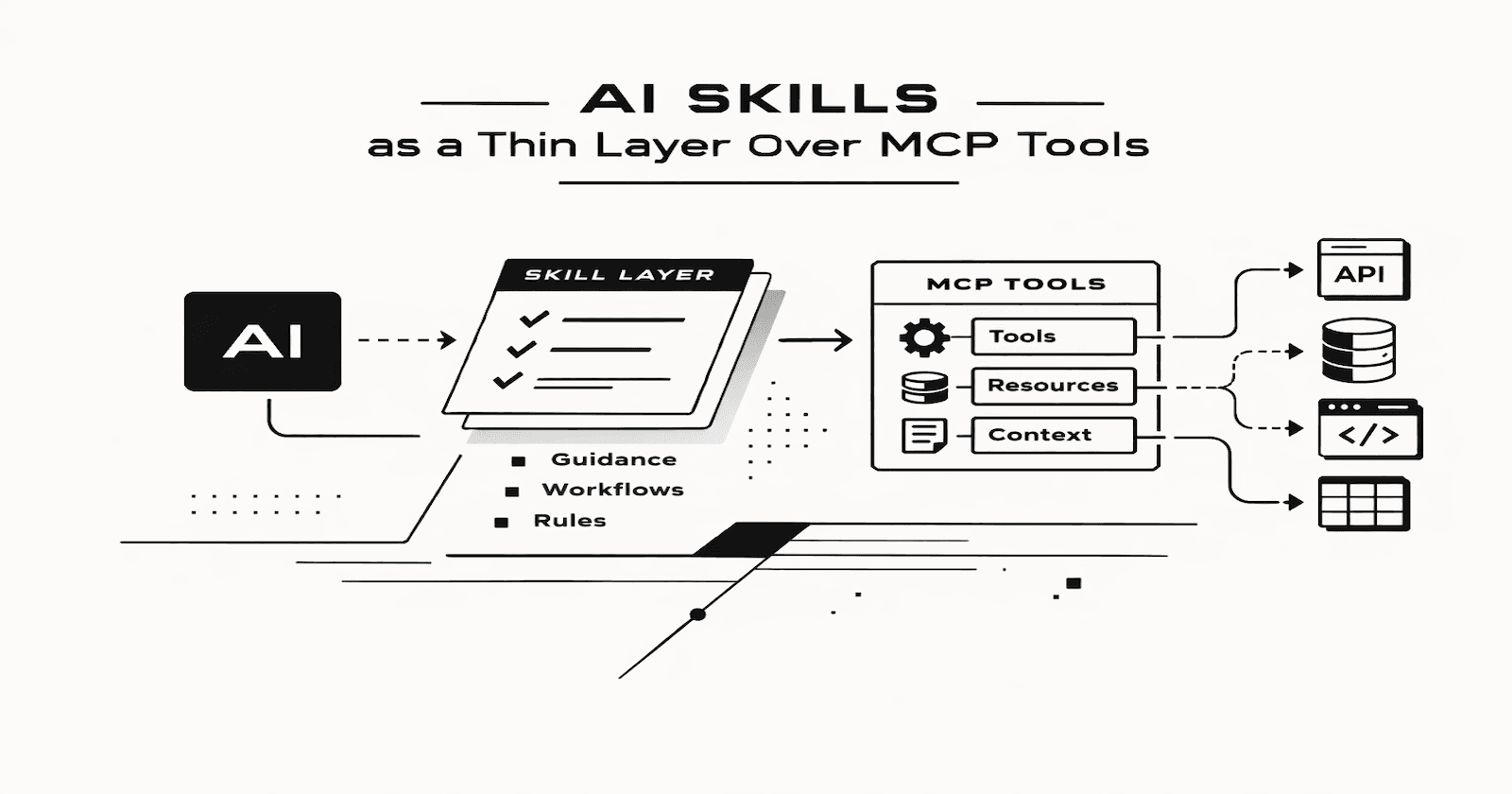

Before reviewing the examples, it’s important to understand how the SQLcl MCP Server interacts with the Large Language Model (LLM).

When you type a prompt in Copilot Chat or Codex, VS Code sends that prompt, along with prior context and a list of available tools, to the LLM. One of those tools can be the SQLcl MCP Server.

The LLM analyzes the prompt and determines whether SQLcl is the appropriate tool to assist in fulfilling the request. If it is, the LLM instructs VS Code to run a command through SQLcl and return the output. The LLM then uses that output to decide the next step, continuing this exchange until the request is resolved.

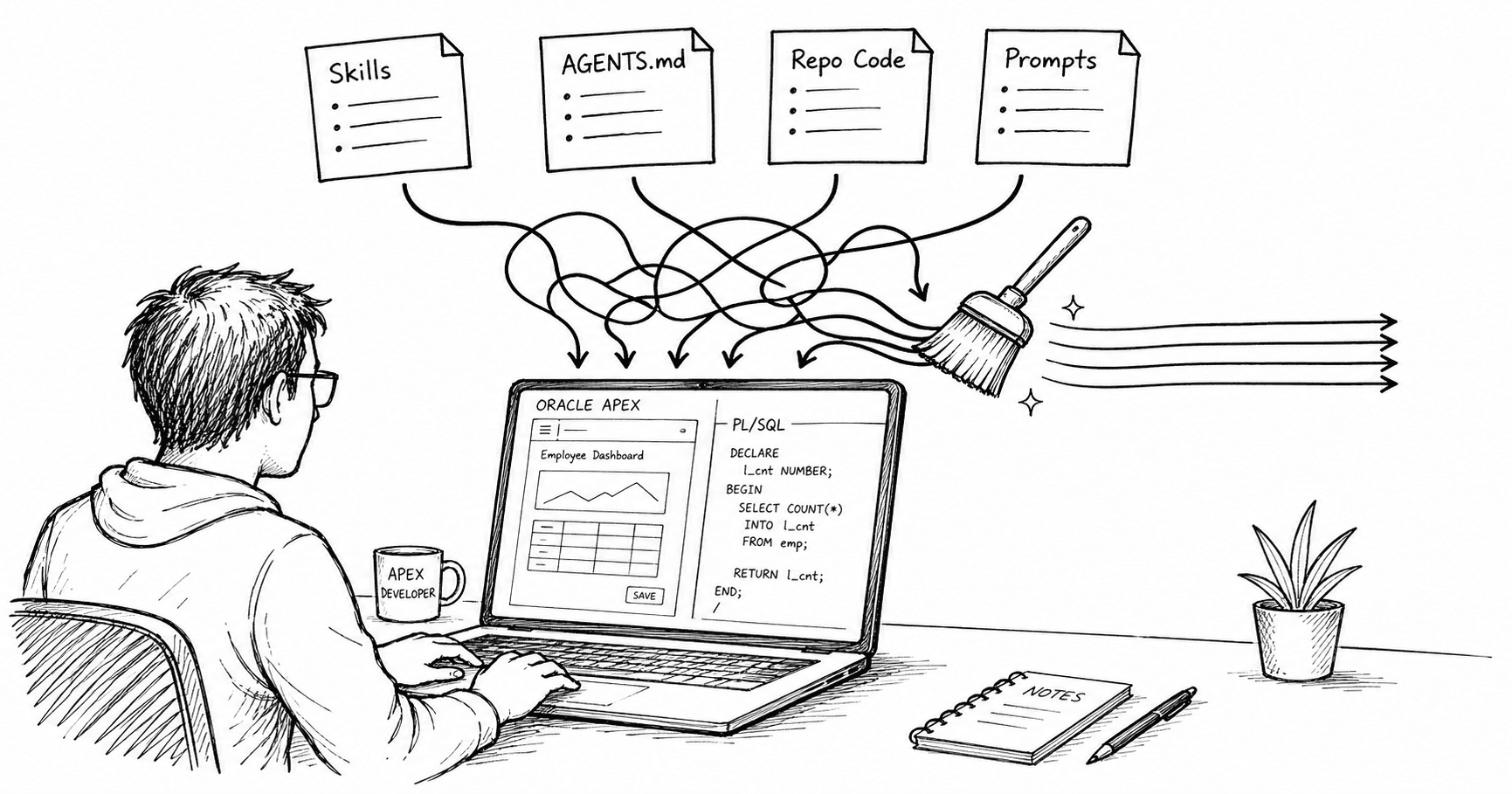

AGENTS.md

Adding a file called AGENTS.md to the root of your GitHub repositories allows you to pass on guidelines to the LLM. Both Codex and GitHub Copilot recognize this file. You can include details such as:

APEX and DB Versions

Folder Structures of your Repo

Coding Standards and Conventions

Formatting Rules

Agent behaviors and guidelines (don’t drop my tables)

SQLcl MCP Use Cases

Now that we are set up, it’s time to go through some use cases. I think this is the best way to understand why the SQLcl MCP server is so helpful.

Create a Query

Let’s start by having the MCP Server create a query for us to use.

Result:

SELECT so.order_number

, so.order_date

, so.total_amount AS order_total

, so.status AS order_status

, c.name AS customer_name

, c.email AS customer_email

, sol.line_number

, sol.quantity

, sol.unit_price

, sol.line_total

, p.sku

, p.name AS product_name

FROM sales_orders so

, customers c

, sales_order_lines sol

, products p

WHERE so.customer_id = c.customer_id

AND sol.order_id = so.order_id

AND sol.product_id = p.product_id;

The first thing you may notice is that the SQL is formatted. This is because of the formatting instructions in the AGENTS.md file. It also uses the old Oracle SQL syntax, again, because this is in my AGENTS.md (and because I am old 👴).

The LLM requested details of the tables in the database, SQLcl provided them, and the LLM generated the query. It correctly identified the tables based on my prompt, even though I did not use exact table names.

Tune the Query

Now that we have a query, let’s tune it for optimal performance when we want to view orders with an order date within the past month.

Here is an excerpt from the LLM’s “model reasoning trace” and its back and forth with SQLcl:

Here is the final response from the LLM:

Tuning Results

Captured the original plan: full scans on SALES_ORDERS and SALES_ORDER_LINES, cost 12 (plan hash 1363380163), so the date filter wasn’t selective without supporting indexes.

Added index sales_orders_ord_dt_idx on (order_date, order_id) to allow the optimizer to apply the last-month filter via an index range scan.

Added index sales_order_lines_ord_idx on (order_id, product_id) so line lookups now use range scans instead of scanning the entire detail table.

Regathered stats on both tables (DBMS_STATS.GATHER_TABLE_STATS) to make the new access paths visible to the optimizer.

New plan hash 1931885458: both SALES_ORDERS and SALES_ORDER_LINES now use index range scans with batched rowid lookups; plan cost dropped to 9, and the query no longer performs storage full scans.

Executed the tuned SQL (ANSI JOIN form) and confirmed it returns no rows for the past month in this sample dataset, indicating the plan runs without error.

Next Steps

Validate the change against production-scale volumes (SQL Monitor/ASH) to quantify real runtime gains.

Keep table stats current after large data loads so the optimizer continues to choose the new indexes.

Combining Context from Your DB and Your Codebase

Sometimes it is helpful to ask questions about your codebase in GitHub and what is actually in your database.

I have a folder open in VS Code with a GitHub Repo:

Now, I can ask questions that span the DB and my file system.

Here is an excerpt from the “model reasoning trace”:

Here is the Response:

Connected to the DEMO database via SQLcl and cross-referenced every SQL/PL/SQL source in the repo. The query select distinct table_name from all_tables where table_name in (...) returned twelve matches, and each has live references in the codebase:

GenAI Schema Tables

GEN_AI_CONFIGS – defined in cn_utils/ai/TABLES/GEN_AI_CONFIGS_CRTB.sql:1, used in cn_utils/ai/PLSQL/GEN_AI_UTL_PKB.sql:18.

GEN_AI_LOGS – defined in cn_utils/ai/TABLES/GEN_AI_LOGS_CRTB.sql:2, logged through cn_utils/ai/PLSQL/GEN_AI_UTL_PKB.sql:8.

GEN_AI_TOOLS – created in cn_utils/ai/TABLES/GEN_AI_TOOLS_CRTB.sql:1, referenced by cn_utils/ai/PLSQL/GEN_AI_TOOLS_UTL_PKB.sql:1.

GEN_AI_TOOL_PARAMS – documented in cn_utils/ai/TABLES/GEN_AI_TOOL_PARAMS_CRTB.sql:1, queried in cn_utils/ai/PLSQL/GEN_AI_TOOLS_UTL_PKB.sql:66.

SALES_ORDERS – DDL/comments in cn_utils/ai/SELECTAI/SELECT_AI_DEMO.sql:19, accessed in cn_utils/ai/PLSQL/GEN_AI_EXAMPLE_TOOLS_PKB.sql:26.

CUSTOMERS – table metadata in cn_utils/ai/SELECTAI/SELECT_AI_DEMO.sql:28, queried in conference_material/KSCOPE25/Built with LOV Using APEX/f777.sql:7176.

Content & Reference Tables

CNBA_BLOG_POSTS – synchronized in cn_utils/ai_demo_app/APEX/f110.sql:334, selected in cn_utils/ai_demo_app/PLSQL/CN_AI_UTL_PKB.sql:36.

CNBA_POST_CHUNKS – inserted in cn_utils/ai_demo_app/APEX/f110.sql:2765, joined in cn_utils/ai_demo_app/PLSQL/CN_AI_UTL_PKB.sql:88.

CN_DOCUMENTS – queried in cn_utils/ai_demo_app/APEX/f110.sql:1580, retrieved in cn_utils/ai_demo_app/PLSQL/CN_AI_UTL_PKB.sql:176.

CN_DOCUMENT_CHUNKS – read in cn_utils/ai_demo_app/APEX/f110.sql:3860, maintained in cn_utils/ai_demo_app/PLSQL/CN_AI_UTL_PKB.sql:252.

PRODUCTS – LOV definition in cn_utils/ai_demo_app/APEX/f110.sql:1572, included in cn_utils/ai/SELECTAI/SELECT_AI_DEMO.sql:10.

COUNTRIES – created in conference_material/KSCOPE25/Built with LOV Using APEX/countries.sql:2, queried in conference_material/KSCOPE25/Built with LOV Using APEX/f777.sql:2462.

Other names appeared in the source scan but are not tables in the DEMO schema (e.g., views like CNDEMO_ADDRESSES_V or package calls such as TABLE_FN_PK.EVS_SOLD_BY_YEAR). Let me know if you want to repeat the check against another connection or extend it to views/synonyms.

Full Agent Mode

Q&A questions are great, but can we create code in the DB? Let’s try!

Notice the table names are not quite right. I have orders instead of sales_orders and order_lines instead of sales_order_lines.

Here is an excerpt from the “model reasoning trace”:

Here is the response:

Created table APIs for customers, orders, order lines, and products in DEMO so each table now has consistent insert/update/delete/fetch routines with APEX debug logging and error propagation.

AIDEMO_CUSTOMERS_PK uses customers%ROWTYPE for CRUD, returning generated IDs and logging apex_debug.error on failure.

AIDEMO_PRODUCTS_PK mirrors the same pattern for PRODUCTS, defaulting created_at to SYSDATE when absent.

AIDEMO_ORDERS_PK targets SALES_ORDERS (no plain ORDERS object exists) and fills defaults for order_date, total_amount, and status during inserts.

AIDEMO_ORDER_LINES_PK targets SALES_ORDER_LINES, recalculating line_total when not provided.

Verified all specs/bodies compile cleanly (USER_OBJECTS shows each package and body VALID).

Next steps:

Exercise the new TAPIs from your APEX flows or unit harness to confirm business logic/defaults behave as expected.

If you need deployment artifacts, export these CREATE OR REPLACE statements into your /SCRIPTS folder for source control.

It figured out the correct tables and created a create/update/delete package for each table:

A few notes:

- It included the

apex_debugcall in the WHEN OTHERS exception, which I had specified in my AGENTS.md

What about APEX?

Because APEX lives in the database, it is an obvious candidate for interrogation by LLMs.

Example response (not my apps 😊):

Application 103 has 45 pages where PAGE_ACCESS_PROTECTION = 'Unrestricted' (apex_application_pages), leaving session state open to tampering; switch these pages to checksum-protected or require re-authentication. 🫢

Browser caching remains enabled for application 103 (BROWSER_CACHE = 'Enabled'), risking sensitive data leakage on shared machines; disable caching for authenticated apps.

Example response (again, not my apps 😊):

apex_release.version_no reports 24.2.9 as the current APEX version; four applications (100, 101, 102, 110) already use compatibility_mode = '24.2' and Universal Theme 24.2 (apex_application_themes).

Application 103 is still on compatibility_mode = '4.2' 🫢 (apex_applications), so its runtime features are constrained to an obsolete APEX release.

The same application 103 runs Universal Theme version 1.2 with file_prefix = '#IMAGE_PREFIX#themes/theme_42/1.2/' (apex_application_themes), which predates the current 24.2 delivery.

Other Prompts

Identify tables in the DEMO schema that are missing foreign keys. Add each missing foreign key to a script called MISSING_FOREIGN_KEYS.sql for my review.

- Not only did the LLM do a good job of identifying missing foreign keys and creating the respective ALTER TABLE scripts, but it also identified orphaned records in one table!

Review database objects in the DEMO schema and files in my codebase to identify unused tables. Create a script called POTENTIAL_TABLE_DROPS.sql with DROP statements for each. Do not execute the drop statements.

Look at SQL queries that have run in the DEMO schema and list the top three poorly performing SQL statements. You may need to run this as a privileged user.

You are an expert technical author with specialist knowledge in Oracle APEX, Oracle Database, PL/SQL, and SQL. You have been assigned to the ‘XYZ project’. The goal of the project is to migrate from a legacy APEX version to the latest version (24.2) while enhancing security and adding the required business functionality. Connect to the 'XYZ' connection using the sqlcl mcp server. Review all of the database objects and APEX applications in the ‘XYX’ schema. Your goal is to create high-quality, easy-to-read, and concise technical documentation for the support team that will take over support for the application. Create a document called TECHICAL_DESIGN.md in the DOCS folder.

Other Considerations

Ensure the LLM and SQLcl are connected to the correct instance and schema. This is especially important if, like me, you have numerous saved database connections in SQL Developer. Because the LLM is deciding what command to run in SQLcl, it can easily pick the wrong connection. It helps to have clear connection names that differ between clients and instances, e.g., ABCCORP-DEV and CLOUDNUEVA-DEV, as opposed to DEV1 and DEV2.

I recommend creating a read-only schema in non-development instances.

For complex questions, the number of iterations between the LLM and SQLcl can be large. Some questions can take multiple minutes to answer and require a significant number of tokens. 💲

I find it interesting to review both the plan that the LLM generates at the start of the process and the “model reasoning trace” it emits as it goes through the process of answering your question. This helps me to build better prompts.

As models evolve (GPT3 > GPT4 > GPT5), the prompts you enter today may not yield the same results tomorrow. As with any AI technology, it is important to build a list of Evals that you can use to test new models and prompts against results from previous iterations.

If you do not already, add rich and informative table and column comments. I laid out why this is important in my post about SELECT AI.

Conclusion

In the end, the SQLcl MCP server isn’t magic 🪄; it’s just a bridge between your database and an LLM. It saves time, reduces context switching, and helps you think through SQL and APEX problems more efficiently. It won’t replace your judgment or stop you from making a bad call, but it can make routine work faster and more consistent.

Appendix 1 - Configure & Test GitHub Copilot with SQLcl MCP Server

Here is a guide to get started with GitHub Copilot in VS Code.

Install GitHub Copilot Extensions for VS Code

- Install the GitHub Copilot and GitHub Copilot Chat Extensions

- Log in using your GitHub account

Configure Copilot to use the SQLcl MCP Server

Open the VS Code Command Palette.

Type MCP to see the MCP Options

- Select MCP: Add Server

- Select Command (stdio)

- Enter a name for the MCP Server

- Select which scope you want it to run in:

- You should now see the mcp.json file

- Now that the SQLcl MCP Server is installed, you can start/stop/restart it by opening the menu and selecting > MCP: List Servers

- Then select SQLcl

- Select ‘Start Server’ to start the MCP Server. The same navigation will allow you to stop an already running MCP Server.

- Click ‘Show Output’ to see a log of what it is doing.

Note: VS Code will start the SQLcl MCP Server for you if it is not already started.

Test from VS Code

Open the CoPilot Chat Extension and type the following in the chat Window:

Connect to DEMO using the SQLcl MCP Server